Exploring AI, One Insight at a Time

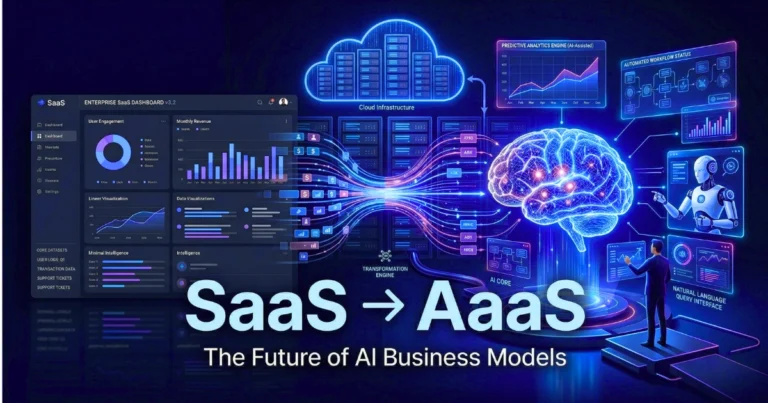

The Rise of Personal AI Assistants: From Chatbots to Full-Time Digital Partners

Let’s get one thing clear—AI chatbots were never the end goal. They were the entry point.

What started as simple website chat bubbles answering FAQs has rapidly evolved into something far more powerful: personal AI assistants that don’t just respond but also act, decide, and execute tasks on your behalf.

This shift is redefining how businesses operate and how users interact with digital systems. We’re no longer moving toward better customer support tools—we’re moving toward always-on digital partners embedded into every workflow.

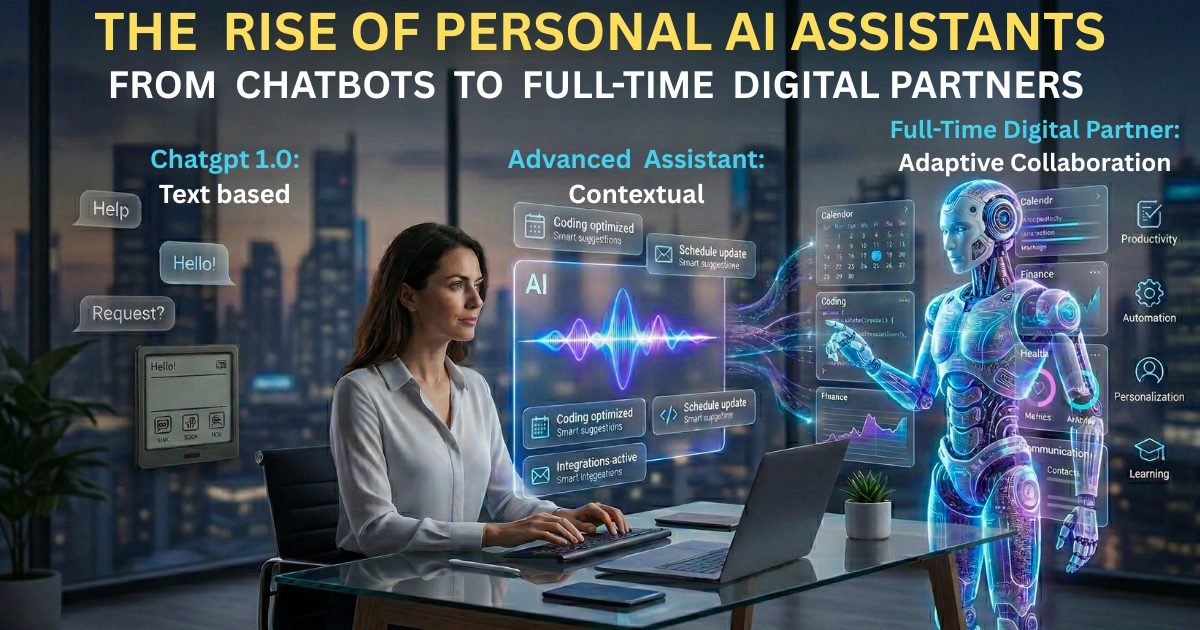

From Reactive Chatbots to Proactive Assistants

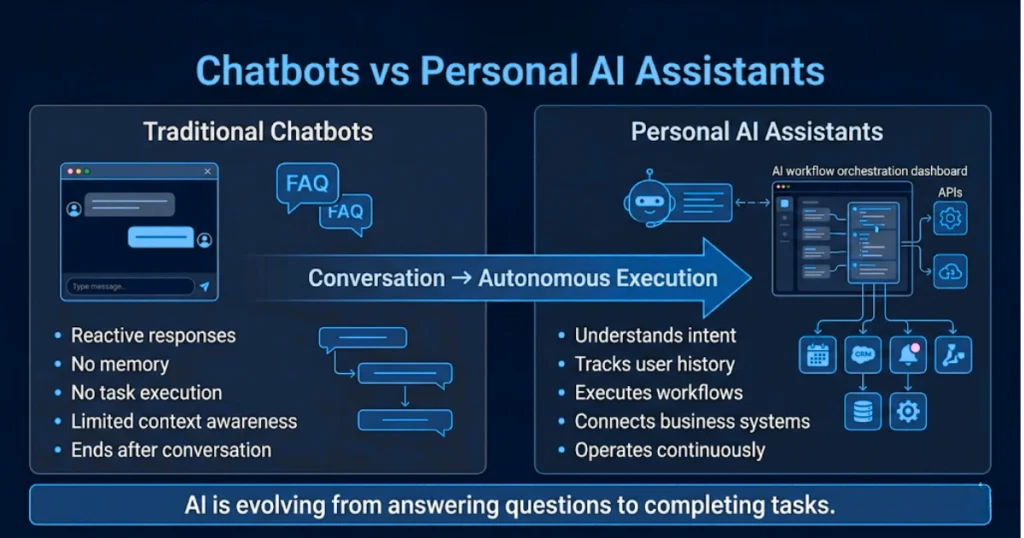

Traditional AI chatbots were built to react. A user asks a question → the chatbot retrieves an answer → the conversation ends.

That model worked for basic support. But it had clear limitations:

- No memory of past interactions

- No ability to take action

- No understanding of broader context

Modern AI assistants are fundamentally different. They don’t just wait for prompts. They:

- Track user behavior over time

- Understand intent, not just keywords

- Execute multi-step tasks across systems

The evolution is simple: Chatbot → Assistant → Autonomous Digital Partner

What Exactly Is a Personal AI Assistant?

A personal AI assistant is more than a chatbot embedded on a website. It’s a system that combines:

- Natural language understanding

- Context awareness

- Task execution capabilities

- Integration with business tools

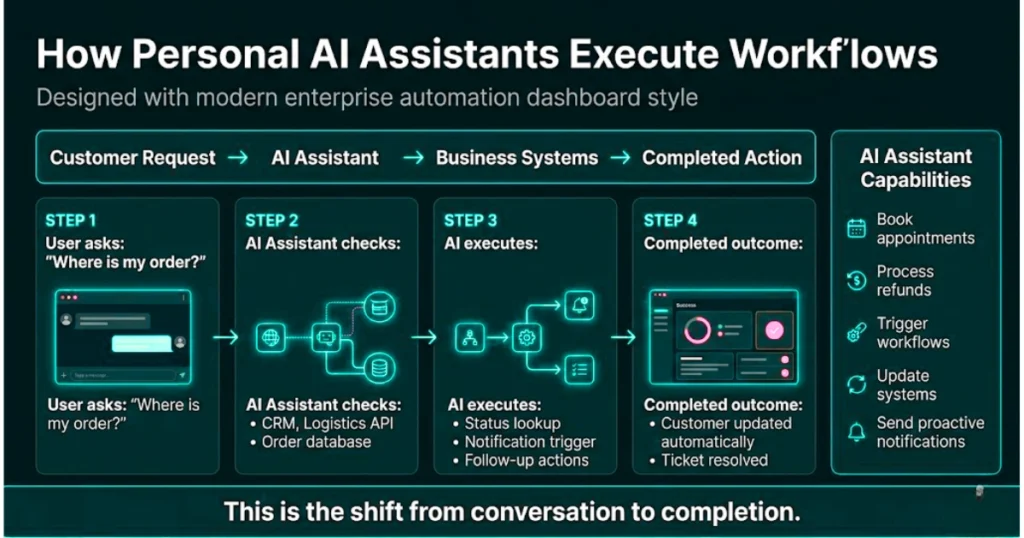

Instead of just answering “Where is my order?”, it can:

- Check order status

- Notify logistics systems

- Send updates proactively

- Handle follow-ups without user input

This is the difference between conversation and completion.
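
To make the difference concrete, here is a minimal Python sketch of an assistant that answers “Where is my order?” and then acts on the answer. The order table, the `Systems` container, and the function name are hypothetical stand-ins; a real assistant would call your order, logistics, and messaging APIs instead of appending to in-memory lists.

```python
from dataclasses import dataclass, field


@dataclass
class Order:
    order_id: str
    status: str  # e.g. "shipped" or "delayed"
    customer_email: str


# Hypothetical in-memory order system; a real one would be an API or database.
ORDERS = {"A100": Order("A100", "delayed", "sam@example.com")}


@dataclass
class Systems:
    """Hypothetical stand-ins for a logistics alert queue and an outbound message queue."""
    logistics_alerts: list = field(default_factory=list)
    outbox: list = field(default_factory=list)


def handle_where_is_my_order(order_id: str, systems: Systems) -> str:
    """Answer the question, then act on what the answer reveals."""
    order = ORDERS.get(order_id)
    if order is None:
        return f"I couldn't find order {order_id}."
    reply = f"Order {order_id} is currently {order.status}."
    if order.status == "delayed":
        # Notify logistics and queue a proactive follow-up without waiting for the user.
        systems.logistics_alerts.append(order_id)
        systems.outbox.append(
            (order.customer_email, f"Update on order {order_id}: we are looking into the delay.")
        )
        reply += " I've alerted our logistics team and will send you updates."
    return reply


systems = Systems()
print(handle_where_is_my_order("A100", systems))
```

The chatbot version would stop after building `reply`; the assistant version also changes state in other systems, which is what “completion” means here.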

Where Do AI Assistants Get Their Intelligence?

Earlier chatbot systems relied heavily on static data:

- Website content

- Predefined FAQs

- Uploaded documents

Modern AI assistants operate on a richer data layer:

- Real-time business data (CRM, ERP, support systems)

- Historical user interactions

- External APIs and live signals

They don’t just “know things”—they connect systems. Over time, they improve through:

- Continuous interaction feedback

- Human corrections and overrides

- Behavioral learning patterns

This creates a feedback loop where the assistant becomes more accurate, faster, and more aligned with your business.
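
One simple way to act on “human corrections and overrides” is to store approved answers and check them before the model responds. The Python sketch below assumes a generic `model_answer` callable and exact-match lookup on normalized questions; the class and method names are hypothetical, and this illustrates a correction store, not how model retraining or fine-tuning works.

```python
from collections import Counter
from typing import Callable, Dict, Optional


class FeedbackLoop:
    """Hypothetical sketch: human corrections override the model on repeat questions."""

    def __init__(self, model_answer: Callable[[str], str]) -> None:
        self.model_answer = model_answer       # stand-in for the underlying model
        self.corrections: Dict[str, str] = {}  # normalized question -> approved answer
        self.outcomes: Counter = Counter()     # tallies of "accepted" vs "corrected"

    @staticmethod
    def _key(question: str) -> str:
        # Exact match after trimming and lowercasing; a real system might use semantic search.
        return question.strip().lower()

    def answer(self, question: str) -> str:
        key = self._key(question)
        if key in self.corrections:
            return self.corrections[key]  # human-approved answer wins
        return self.model_answer(question)

    def record(self, question: str, correction: Optional[str] = None) -> None:
        """Log whether a reply was accepted as-is or corrected by a human."""
        if correction is None:
            self.outcomes["accepted"] += 1
        else:
            self.outcomes["corrected"] += 1
            self.corrections[self._key(question)] = correction

    def acceptance_rate(self) -> float:
        total = sum(self.outcomes.values())
        return self.outcomes["accepted"] / total if total else 0.0


loop = FeedbackLoop(lambda q: "Returns take 14 days.")
loop.record("How long do returns take?", correction="Returns take 30 days.")
print(loop.answer("how long do returns take?"))  # Returns take 30 days.
```

Tracking the acceptance rate over time is one rough way to check whether the loop is actually making the assistant more accurate.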

Why Businesses Are Moving Beyond Chatbots

The real value of AI assistants isn’t just automation—it’s operational leverage.

For Businesses:

- Reduce support workload without sacrificing quality

- Capture and qualify leads automatically

- Handle thousands of interactions simultaneously

- Lower operational costs at scale

- Free up human teams for high-value work

For Customers:

- Instant, always-available support

- Faster resolution times

- Personalized responses based on history

- Seamless interactions across channels

- Multilingual communication

The result is not just efficiency—it’s a fundamentally better experience.

The Big Shift: From Support Tool to Digital Operator

Here’s where things get interesting.

AI is no longer limited to answering queries. With the rise of agent-based systems, assistants can now:

- Complete transactions

- Book appointments

- Manage workflows

- Trigger backend processes

For example: A customer asks for a refund → The assistant verifies eligibility → Processes the request → Updates the system → Sends confirmation.

No human intervention required. This is the moment where AI stops being a tool—and becomes a digital operator inside your business.
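
The refund flow above maps onto a short pipeline of checks and side effects. This Python sketch assumes a hypothetical 30-day refund window and uses in-memory lists in place of a payment provider and a messaging system; as one possible design choice, ineligible requests are escalated to a person rather than silently rejected.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Tuple

REFUND_WINDOW = timedelta(days=30)  # hypothetical refund policy


@dataclass
class Purchase:
    order_id: str
    amount: float
    purchased_on: date
    refunded: bool = False


def check_eligibility(p: Purchase, today: date) -> Tuple[bool, str]:
    """Step 1: verify eligibility against the (hypothetical) policy."""
    if p.refunded:
        return False, "already refunded"
    if today - p.purchased_on > REFUND_WINDOW:
        return False, "outside the refund window"
    return True, "ok"


def process_refund(p: Purchase, today: date,
                   payments: List[Tuple[str, float]], outbox: List[str]) -> str:
    ok, reason = check_eligibility(p, today)
    if not ok:
        return f"escalated to a human: {reason}"
    payments.append((p.order_id, p.amount))  # Step 2: process (stand-in for a payment call)
    p.refunded = True                        # Step 3: update the system of record
    outbox.append(f"Refund of {p.amount:.2f} for order {p.order_id} confirmed.")  # Step 4: confirm
    return "refunded"


payments: List[Tuple[str, float]] = []
outbox: List[str] = []
purchase = Purchase("B7", 25.0, date(2024, 1, 1))
print(process_refund(purchase, date(2024, 1, 15), payments, outbox))  # refunded
print(process_refund(purchase, date(2024, 1, 16), payments, outbox))  # escalated to a human: already refunded
```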

Can AI Assistants Replace Human Teams?

Not entirely, and that’s not the goal. AI won’t replace your team, but it will replace your workflow.

AI excels at:

- Speed

- Scale

- Repetition

- Data processing

Humans excel at:

- Emotional intelligence

- Complex decision-making

- Relationship building

The most effective systems combine both: AI handles the volume; humans handle the nuance.

This “human-in-the-loop” model helps ensure:

- Brand voice consistency

- Quality control

- Better customer relationships
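
A minimal sketch of how a human-in-the-loop router might decide who handles each reply. It assumes every AI draft comes with a confidence score and a risk label; both inputs, the `route` function, and the 0.8 threshold are hypothetical, and in practice they would come from your own model and review policy.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.8  # hypothetical; tune to your own quality bar


@dataclass
class Draft:
    text: str
    confidence: float  # assumed model confidence score in [0, 1]
    risk: str          # assumed label: "low", "medium", or "high"


def route(draft: Draft) -> str:
    """Decide whether an AI-drafted reply is sent directly or handed to a person."""
    if draft.risk == "high":
        return "human_takeover"  # humans handle the nuance
    if draft.risk == "low" and draft.confidence >= CONFIDENCE_THRESHOLD:
        return "send"            # AI handles the volume
    return "human_review"        # a person approves or edits before sending


print(route(Draft("Your order shipped today.", 0.93, "low")))          # send
print(route(Draft("I understand you want to cancel.", 0.9, "high")))   # human_takeover
```

Keeping the thresholds explicit makes it straightforward to widen the assistant’s autonomy gradually as quality control shows it can be trusted with more.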

Cost vs Value: Why Adoption Is Accelerating

AI assistants are no longer enterprise-only tools. Today:

- Entry-level solutions are affordable

- Integration is simpler than ever

- Setup requires minimal technical expertise

But the real calculation isn’t cost; it’s ROI. When an AI assistant can replace repetitive manual work, increase conversion rates, improve response times, and reduce churn, it can quickly become one of the highest-leverage investments a business can make.
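
The ROI framing can be written as a simple ratio: ROI = (value gained − cost) / cost. In this Python sketch, value gained is approximated as hours of manual work saved times an hourly cost, plus any added revenue; the function, its parameter names, and the sample figures are placeholders for illustration, not benchmarks.

```python
def monthly_roi(hours_saved: float, hourly_cost: float,
                extra_revenue: float, assistant_cost: float) -> float:
    """Return ROI as a ratio, (value gained - cost) / cost, for one month."""
    if assistant_cost <= 0:
        raise ValueError("assistant_cost must be positive")
    value_gained = hours_saved * hourly_cost + extra_revenue
    return (value_gained - assistant_cost) / assistant_cost


# Placeholder inputs: 100 hours saved at 30/hour, 500 in added revenue, 1,000 tool cost.
print(monthly_roi(100, 30, 500, 1000))  # 2.5, i.e. a 250% return
```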

What the Future Looks Like

We are moving toward a world where AI assistants become deeply embedded in daily life and business operations.

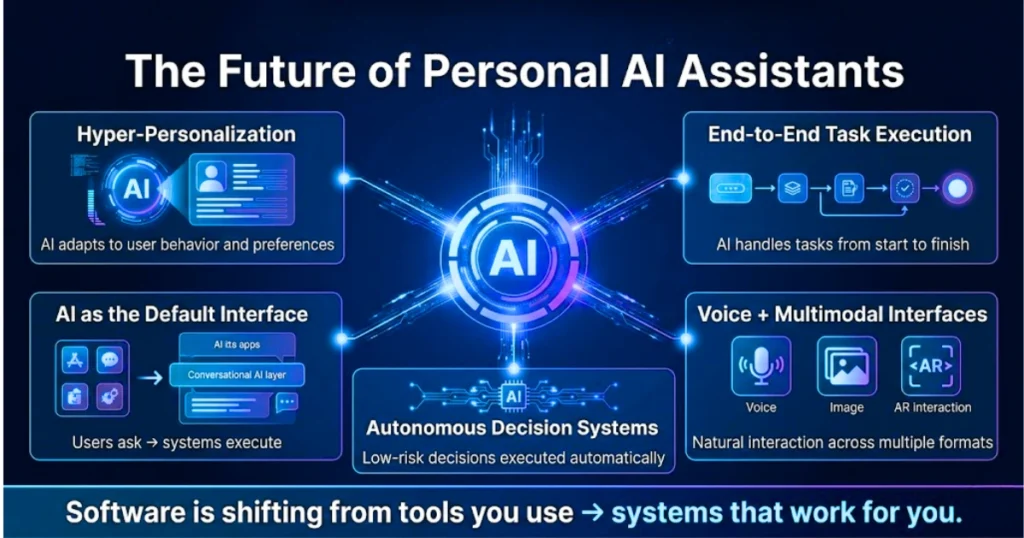

In the next few years, expect:

- Hyper-Personalization: Assistants will adapt tone, recommendations, and behavior based on individual users.

- End-to-End Task Execution: From discovery to purchase to support—handled by AI.

- Voice + Multimodal Interfaces: Users will interact through voice, visuals, and even immersive environments.

- Autonomous Decision Systems: Assistants will make low-risk decisions without human approval.

- AI as a Default Interface: Instead of navigating apps, users will simply ask—and the system will execute.

The Real Competitive Advantage

Most businesses are still treating AI like a feature: a chatbot on the homepage or a support automation tool.

That mindset is already outdated.

The real advantage comes from:

- Embedding AI into core workflows

- Connecting it to real business systems

- Letting it operate, not just respond

The Bigger Picture

Personal AI assistants are quietly shifting the role of software—from something you use to something that works for you.

This isn’t just an upgrade in customer experience or automation. It’s a deeper change in how execution happens inside a business. Tasks are no longer just supported by tools—they’re being completed by intelligent systems that operate alongside your team.

As this transition accelerates, the advantage will move toward companies that integrate AI into their core workflows rather than treating it as a surface-level feature.

The real shift isn’t about having access to AI. It’s about building systems where AI consistently delivers outcomes.