Exploring AI, One Insight at a Time

AI Won’t Replace Your Team — But It Will Replace Your Workflow

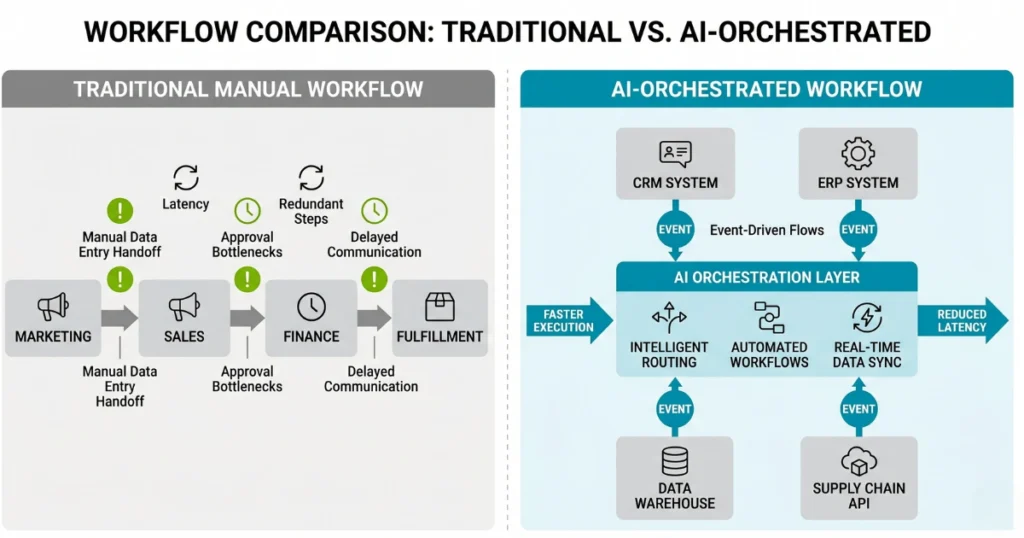

Treating machine learning as a discrete point solution bolted onto outdated middleware is an architectural dead end. Intelligent orchestration layers are supplanting static enterprise service buses and changing how work moves between internal systems.

By embedding deterministic automation alongside probabilistic models directly within the core event-driven architecture, engineering teams can systematically compress cycle times and eradicate the latency inherent in inter-departmental handoffs.

This forces a transition toward a highly responsive operating model in which throughput scales with cloud-native infrastructure rather than with headcount.

The endgame here is workflow reconfiguration rather than workforce elimination. You are reallocating human capital purely toward systemic judgment, architectural strategy, and hard competitive differentiation.

Synchronous Handoffs Are Architectural Poison

Your teams are wasting cycles waiting on poorly configured execution triggers.

We saw this exact friction manifest last month during a massive enterprise deployment. A deterministic legacy billing API expected a rigid integer format for transaction IDs, but the newly integrated LLM orchestration agent occasionally output the IDs as wrapped JSON objects with trailing whitespace.

The orchestration layer retried the payload continuously. This caused a localized API latency spike of 6,200 ms per call, and the retry loop silently ran up a $42,000 AWS Lambda compute bill before the DevOps team noticed the dead-letter queue alert on Monday morning.
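One way to keep a retry loop like this from running unattended is to give every payload a fixed attempt budget and to stop immediately when the downstream system rejects the format. The sketch below is illustrative only, not the configuration from that deployment: `NonRetryableError`, `DeadLetterQueue`, `deliver_with_budget`, and the default limits are hypothetical names and values chosen for the example.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


class NonRetryableError(Exception):
    """The downstream system rejected the payload; retrying cannot help."""


@dataclass
class DeadLetterQueue:
    """Hypothetical in-memory stand-in for a real dead-letter queue."""
    messages: list[tuple[Any, str]] = field(default_factory=list)

    def put(self, payload: Any, reason: str) -> None:
        self.messages.append((payload, reason))


def deliver_with_budget(
    send: Callable[[Any], None],
    payload: Any,
    dlq: DeadLetterQueue,
    max_attempts: int = 3,        # assumed budget, tune per integration
    base_delay_s: float = 0.5,    # assumed starting backoff
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Try to deliver a payload; park it in the DLQ instead of looping forever."""
    for attempt in range(1, max_attempts + 1):
        try:
            send(payload)
            return True
        except NonRetryableError as exc:
            # A format rejection will fail the same way every time: stop now.
            dlq.put(payload, f"non-retryable: {exc}")
            return False
        except Exception as exc:
            if attempt == max_attempts:
                dlq.put(payload, f"gave up after {attempt} attempts: {exc}")
                return False
            # Exponential backoff: 0.5s, 1s, 2s, ... between attempts.
            sleep(base_delay_s * 2 ** (attempt - 1))
    return False
```

The point of the design is that a malformed payload costs one call, not thousands, and a transient failure costs at most `max_attempts` calls before a human sees it in the queue.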

Do not allow probabilistic systems to directly write to legacy deterministic schemas without a strict validation gateway.
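A validation gateway for the transaction ID case can be very small. The sketch below assumes the agent's output arrives as a bare integer, a digit string with stray whitespace, or a JSON object wrapping the ID under a single key. The key name `transaction_id`, the 18-digit cap, and the function and exception names are assumptions made for the example, not details of the billing API in question.

```python
import json
import re
from typing import Any

# Assumption: IDs are non-negative and fit in 18 digits (safe for signed 64-bit).
_DIGITS = re.compile(r"[0-9]{1,18}")


class InvalidTransactionId(ValueError):
    """The model output could not be reduced to a plain integer ID."""


def normalize_transaction_id(raw: Any, key: str = "transaction_id") -> int:
    """Return a plain int, or raise before anything reaches the legacy API."""
    value = raw
    if isinstance(value, str):
        text = value.strip()
        if text.startswith("{"):
            # Looks like a wrapped JSON object: parse it rather than guess.
            try:
                value = json.loads(text)
            except json.JSONDecodeError as exc:
                raise InvalidTransactionId(f"unparseable JSON: {text!r}") from exc
        else:
            value = text

    if isinstance(value, dict):
        # Accept only the exact expected shape, nothing extra.
        if set(value) != {key}:
            raise InvalidTransactionId(f"unexpected keys: {sorted(value)!r}")
        value = value[key]

    if isinstance(value, bool):
        # bool is a subclass of int in Python; reject it explicitly.
        raise InvalidTransactionId(f"boolean is not an ID: {raw!r}")
    if isinstance(value, int):
        if value < 0:
            raise InvalidTransactionId(f"negative ID: {raw!r}")
        return value
    if isinstance(value, str):
        digits = value.strip()
        if _DIGITS.fullmatch(digits):
            return int(digits)

    raise InvalidTransactionId(f"not an integer transaction ID: {raw!r}")
```

Anything that raises `InvalidTransactionId` should be treated as a permanent failure and parked for review rather than retried, since the same malformed output will be rejected every time.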

Eliminate synchronous loops where human execution is the primary failure point.

The Operational Friction Tax

Rethinking the workflow requires stripping away the assumption that more volume automatically demands more administrative overhead.

When an intelligent routing layer handles repetitive execution tasks, it absorbs the operational friction that typically demands middle-management intervention.

Your developers and architects are then freed from constantly triaging broken data pipelines or manually approving stage-gate deployments. The intelligence layer dynamically routes the exceptions, isolating the noise from the critical decision paths that actually require human cognition.
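As a rough illustration of exception routing, the sketch below uses fixed rules rather than a learned model, which is a deliberate simplification. Every name in it (`PipelineException`, `Route`, the `kind` values, the attempt limit) is hypothetical and stands in for whatever your own pipeline emits.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_RETRY = "auto_retry"
    AUTO_QUARANTINE = "auto_quarantine"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class PipelineException:
    source: str               # which pipeline or service raised it
    kind: str                 # e.g. "timeout", "schema_mismatch" (assumed labels)
    attempts: int             # how many automatic attempts have been made
    affects_production: bool  # whether live traffic is impacted


def route_exception(exc: PipelineException, max_auto_attempts: int = 3) -> Route:
    """Keep routine noise away from people; escalate everything else."""
    # Transient failures get retried automatically, up to a fixed budget.
    if exc.kind == "timeout" and exc.attempts < max_auto_attempts:
        return Route.AUTO_RETRY
    # Bad records outside production are set aside without paging anyone.
    if exc.kind == "schema_mismatch" and not exc.affects_production:
        return Route.AUTO_QUARANTINE
    # Unknown kinds, exhausted budgets, and production impact need judgment.
    return Route.HUMAN_REVIEW
```

The default branch matters most: anything the rules do not recognize goes to a person, so the automated paths can only shrink the human queue, never hide something from it.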

Orchestration is the new perimeter.

If your implementation strategy relies on humans copying and pasting outputs between siloed web applications, you are not integrating AI. You are just subsidizing a profoundly inefficient data pipeline that will collapse under its own API polling costs by Q4.