Exploring AI, One Insight at a Time

From Pilot Project to Profit Engine: Making AI Pay Off in the Real World

Vendor Press Releases Die at the API Gateway

The latest vendor noise touts an “AI Profit Engine” that magically ingests disparate data sources to optimize retail margins.

This is the classic trap of treating machine learning models as infinite data vacuums without regard for the underlying orchestration mechanics.

When you try to slam unstructured vendor feeds, legacy POS exports, and real-time social sentiment through a generalized forecasting model, the theoretical ROI evaporates into compute overhead.

The end-to-end approach sold in executive slide decks glosses over the brutal reality of data normalization pipelines choking on mismatched JSON formatting.

Nobody talks about the schema until the pipeline breaks at 3:00 AM on a Friday.

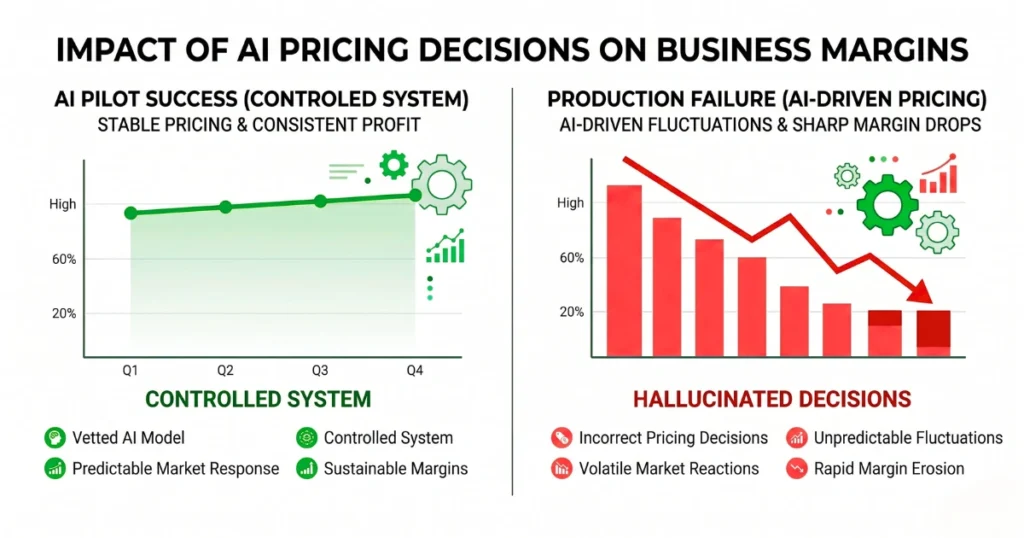

The Margin Destruction of Pricing Hallucinations

The assertion that modern infrastructure and strong governance magically turn pilots into profits fundamentally misrepresents how these models behave in production.

We saw this exact architecture fail spectacularly last month, when a mid-market retailer bypassed its deterministic pricing rules in favor of an LLM-driven dynamic inventory model.

From then on, every localized weather perturbation triggered the model to reprice aggressively.

It hallucinated a correlation between a storm surge and discount elasticity, authorizing a 40% markdown on premium rainwear exactly when demand peaked. It incinerated $180,000 in gross margin in six hours before operations finally pulled the plug.

Ignorance of how probabilistic models handle edge cases is an existential threat to your operating margins.
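One way to keep deterministic rules in the loop is to treat the model's price as a proposal and clamp it before it ships. The sketch below assumes a hypothetical `PricingRule` with a maximum markdown and a floor price; the 15% cap is an illustrative number, not one taken from the incident above.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class PricingRule:
    max_markdown: Decimal  # largest allowed discount, as a fraction of list price
    floor_price: Decimal   # absolute price below which nothing may be sold


def apply_guardrails(
    list_price: Decimal, proposed_price: Decimal, rule: PricingRule
) -> tuple[Decimal, bool]:
    """Return (final_price, was_clamped) for a model-proposed price."""
    lowest_allowed = max(
        list_price * (Decimal(1) - rule.max_markdown),
        rule.floor_price,
    )
    if proposed_price < lowest_allowed:
        # The deterministic rule wins; the flag lets operations see the override.
        return lowest_allowed, True
    return proposed_price, False


# Hypothetical rule: at most 15% off, never below 70.00.
rule = PricingRule(max_markdown=Decimal("0.15"), floor_price=Decimal("70.00"))

# The model proposes a 40% markdown on a 100.00 item.
price, clamped = apply_guardrails(Decimal("100.00"), Decimal("60.00"), rule)
```

Here the 40% cut is held to the 15% cap and flagged, so a bad correlation costs an alert rather than a margin.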

“End-to-End” is Code for Unauditable Black Boxes

Trusting an opaque, packaged wrapper to dictate pricing across all channels creates a system where accountability goes to die.

If the model slashes margins on winter coats in November because of a hallucinated correlation in an external data source, your engineering team has no mechanism to trace the specific weightings that caused the catastrophic markdown.

You are trading deterministic control for probabilistic gambling. Production-grade systems require modular RAG architectures where the retrieval logic is strictly decoupled from the generation phase.
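As a rough illustration of that decoupling, the sketch below keeps retrieval and generation behind separate function boundaries and records which documents fed each answer. Everything here is hypothetical: the toy `CORPUS`, the keyword retriever, and the stub generator stand in for a real vector store and a real model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str


@dataclass
class Answer:
    text: str
    source_ids: list[str] = field(default_factory=list)  # audit trail


# Retrieval and generation are separate, swappable callables.
Retriever = Callable[[str], list[Document]]
Generator = Callable[[str, list[Document]], str]


def answer_query(query: str, retrieve: Retriever, generate: Generator) -> Answer:
    """Run retrieval first, then generation, and keep the retrieved IDs."""
    docs = retrieve(query)
    text = generate(query, docs)
    return Answer(text=text, source_ids=[d.doc_id for d in docs])


# Toy corpus and components, for illustration only.
CORPUS = [
    Document("rule-7", "Premium rainwear markdown cap is 15 percent"),
    Document("rule-9", "Winter coats are never discounted in November"),
]


def keyword_retrieve(query: str) -> list[Document]:
    """Naive keyword overlap; a stand-in for a vector search."""
    terms = set(query.lower().split())
    return [d for d in CORPUS if terms & set(d.text.lower().split())]


def stub_generate(query: str, docs: list[Document]) -> str:
    """Stand-in for a model call; it only sees what retrieval handed over."""
    return f"{len(docs)} source(s) consulted for: {query}"


answer = answer_query("rainwear markdown", keyword_retrieve, stub_generate)
```

Because the generator only receives what the retriever returned, `answer.source_ids` gives engineering a concrete place to start when a markdown looks wrong.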

True governance means hard rate limiting at the orchestration layer, aggressive fallback protocols for mangled outputs, and firing any consultant who refuses to explain their vector database retrieval strategy.
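The first two of those controls can be sketched in a few lines. This is one possible shape, not a reference implementation: `SlidingWindowLimiter` and `parse_price_or_fallback` are hypothetical names, and the assumption that the model returns JSON with a `price` field is mine.

```python
import json
import math
import time
from collections import deque
from typing import Callable


class SlidingWindowLimiter:
    """Allow at most max_calls actions within any window_seconds span."""

    def __init__(
        self,
        max_calls: int,
        window_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._clock = clock
        self._calls: deque[float] = deque()

    def allow(self) -> bool:
        now = self._clock()
        # Drop timestamps that have aged out of the window.
        while self._calls and now - self._calls[0] >= self.window_seconds:
            self._calls.popleft()
        if len(self._calls) >= self.max_calls:
            return False
        self._calls.append(now)
        return True


def parse_price_or_fallback(raw_output: str, fallback_price: float) -> float:
    """Use the model's price only if the output parses and looks sane."""
    try:
        price = json.loads(raw_output)["price"]
    except (ValueError, KeyError, TypeError):
        # Malformed JSON, missing field, or a non-object payload.
        return fallback_price
    if isinstance(price, bool) or not isinstance(price, (int, float)):
        return fallback_price
    try:
        value = float(price)
    except OverflowError:
        return fallback_price
    if not math.isfinite(value) or value <= 0:
        return fallback_price
    return value
```

A limiter like this caps how many price changes the model can push in a window, and the parser falls back to the last known-good price whenever the output is mangled.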