Fine-Tuning vs RAG: The $50,000 Mistake Companies Make in AI Projects

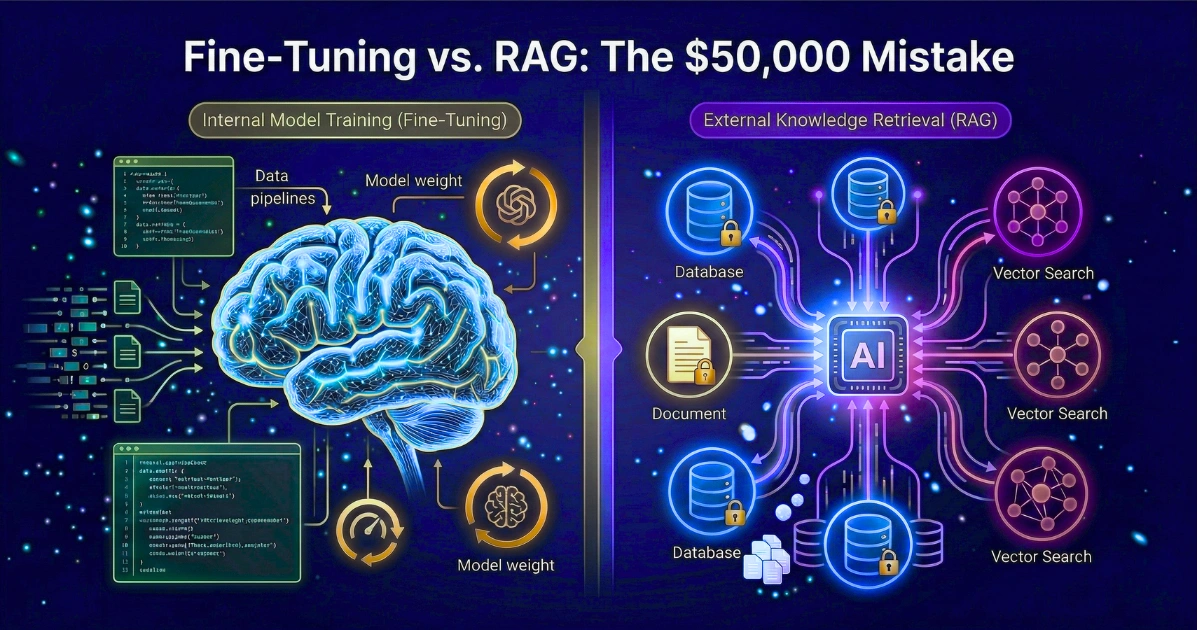

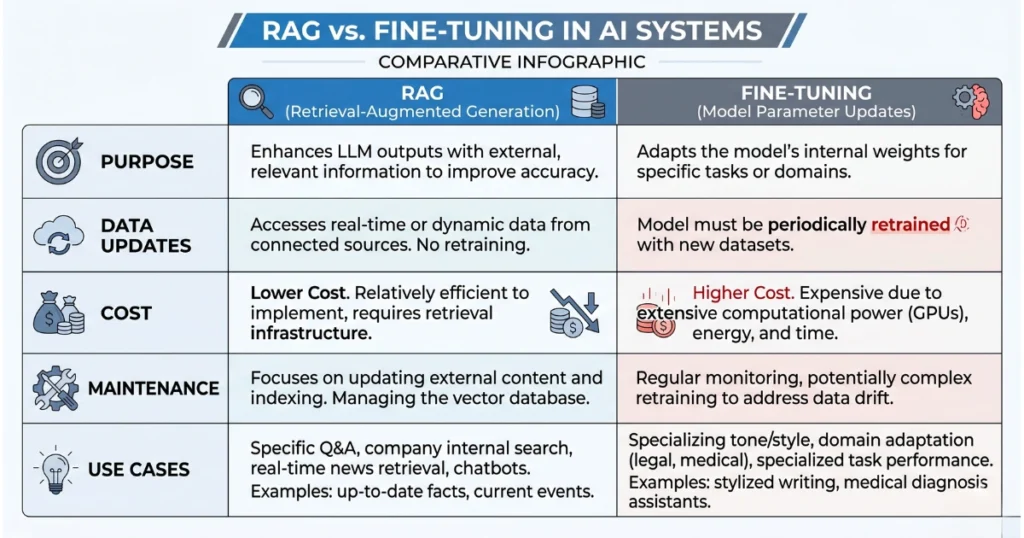

The fine-tuning vs RAG debate is rarely settled by model accuracy. In practice, it is settled by cloud network topology. Amazon Bedrock's regional model availability has quietly become a geographic zoning disaster.

Executives read whitepapers arguing about retrieval-augmented generation versus parameter updates. They completely miss the physical realities of their rented infrastructure.

Ultimately, you aren’t choosing between methodologies. You are choosing which data center has available GPUs this quarter.

AWS regional quotas dictate your architecture

Your DevOps lead will discover this harsh reality during a production sprint. Look at the actual model IDs and their regional restrictions. Fine-tuning any new Nova model confines your team entirely to us-east-1.

However, pivoting to another model family changes everything. Running a fine-tuning job on llama3-3-70b (open-weights) or claude-3-haiku (proprietary) forces a massive migration. AWS makes you pack your bags and move your data pipeline to us-west-2. This isn’t a multi-model ecosystem. It is a hardcoded coastal divide that actively punishes hybrid deployments.
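The coastal divide can be checked mechanically before a sprint starts. Below is a minimal sketch, assuming the region restrictions are exactly as this article describes them. The `FINE_TUNING_REGIONS` table and the `nova` key are illustrative and not pulled from any AWS API, so verify them against current AWS documentation before relying on them.

```python
# Fine-tuning regions as described in this article (an assumption,
# not verified against AWS documentation). Keys are illustrative labels.
FINE_TUNING_REGIONS = {
    "nova": {"us-east-1"},
    "llama3-3-70b": {"us-west-2"},
    "claude-3-haiku": {"us-west-2"},
}


def common_fine_tuning_regions(model_families):
    """Return the regions where every listed model family can be fine-tuned."""
    region_sets = [FINE_TUNING_REGIONS[name] for name in model_families]
    if not region_sets:
        return set()
    return set.intersection(*region_sets)


# A hybrid Nova + Llama plan has no shared fine-tuning region.
print(common_fine_tuning_regions(["nova", "llama3-3-70b"]))

# Llama and Claude Haiku share a region under the same assumptions.
print(common_fine_tuning_regions(["llama3-3-70b", "claude-3-haiku"]))
```

An empty result means a hybrid plan cannot fine-tune every model in a single region, which is the situation the paragraph above describes.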

Cross-region data transfer penalties will bankrupt you

Your team will likely build a standard RAG pipeline. This setup pulls embeddings and source documents from a corporate S3 data lake pinned to us-east-1. Then it runs the retrieved context chunks against a fine-tuned model in us-west-2.

The application layer assumes API calls are geographically agnostic. Therefore, the system silently ships every retrieved payload across the country on every request. Thirty days later, your CFO drops a $51,430 AWS invoice on your desk.
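One way to surface this before the invoice does is to write down where each pipeline stage lives and flag every hop that crosses a region boundary. The following is a minimal sketch. The `Hop` type and the stage names are hypothetical, and the regions mirror the pipeline described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    """One data movement between two pipeline stages (hypothetical model)."""
    source: str
    source_region: str
    target: str
    target_region: str


def cross_region_hops(hops):
    """Return only the hops whose source and target regions differ."""
    return [hop for hop in hops if hop.source_region != hop.target_region]


# Stage names are illustrative; regions follow the pipeline in the text.
pipeline = [
    Hop("s3-data-lake", "us-east-1", "retriever", "us-east-1"),
    Hop("retriever", "us-east-1", "fine-tuned-model", "us-west-2"),
]

for hop in cross_region_hops(pipeline):
    print(f"{hop.source} ({hop.source_region}) -> {hop.target} ({hop.target_region})")
```

Running a check like this in CI makes the cross-country hop an explicit design decision instead of a billing surprise.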

Cross-region data transfer charges at $0.02 per GB drive this massive bill. NAT Gateway processing fees add up quickly when all you are doing is shuttling vector payloads around. Moving gigabytes of raw JSONL files between coasts also adds a round trip to every request, which drags application latency down to an unusable crawl.
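The arithmetic is simple enough to keep next to your architecture diagram. This is a rough sketch that uses only the $0.02 per GB rate quoted in this article. The function names are illustrative, and NAT Gateway fees are deliberately left out because no rate for them is given here.

```python
# Cross-region transfer rate quoted in this article (USD per GB).
TRANSFER_RATE_USD_PER_GB = 0.02


def transfer_cost_usd(gigabytes, rate=TRANSFER_RATE_USD_PER_GB):
    """Estimated cross-region transfer cost for a given volume."""
    return gigabytes * rate


def gigabytes_for_budget(usd, rate=TRANSFER_RATE_USD_PER_GB):
    """Volume that a given spend would cover at the transfer rate alone."""
    return usd / rate


# Cost of moving 1,000 GB between regions.
print(f"${transfer_cost_usd(1_000):,.2f}")

# Volume implied by a $51,430 invoice if it were transfer charges only.
print(f"{gigabytes_for_budget(51_430):,.0f} GB")
```

At the transfer rate alone, a $51,430 invoice corresponds to roughly 2.57 million GB, which suggests how much of a real bill may come from the gateway and other charges layered on top.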

Bedrock fails as an abstraction layer

Bedrock was sold as the ultimate abstraction over foundation models, but it fails at that job. Stop pretending you can hot-swap foundation models at the API layer. The underlying infrastructure treats them like mutually exclusive physical hardware.

Your strict corporate data governance policy might mandate staying in us-east-1. If so, Meta’s Llama models are completely off the table for fine-tuning. Your multi-model RAG strategy is just a geographic illusion.

Pick your coast carefully. Your entire AI strategy depends on where Amazon racks its silicon.