
Specialized vs. Generalist AI: Which Model Wins the Generative War? (2026 Guide)

Artificial intelligence isn’t a single technology—it’s a spectrum. And right now, the biggest misunderstanding in the market is treating all AI systems as interchangeable.

They’re not.

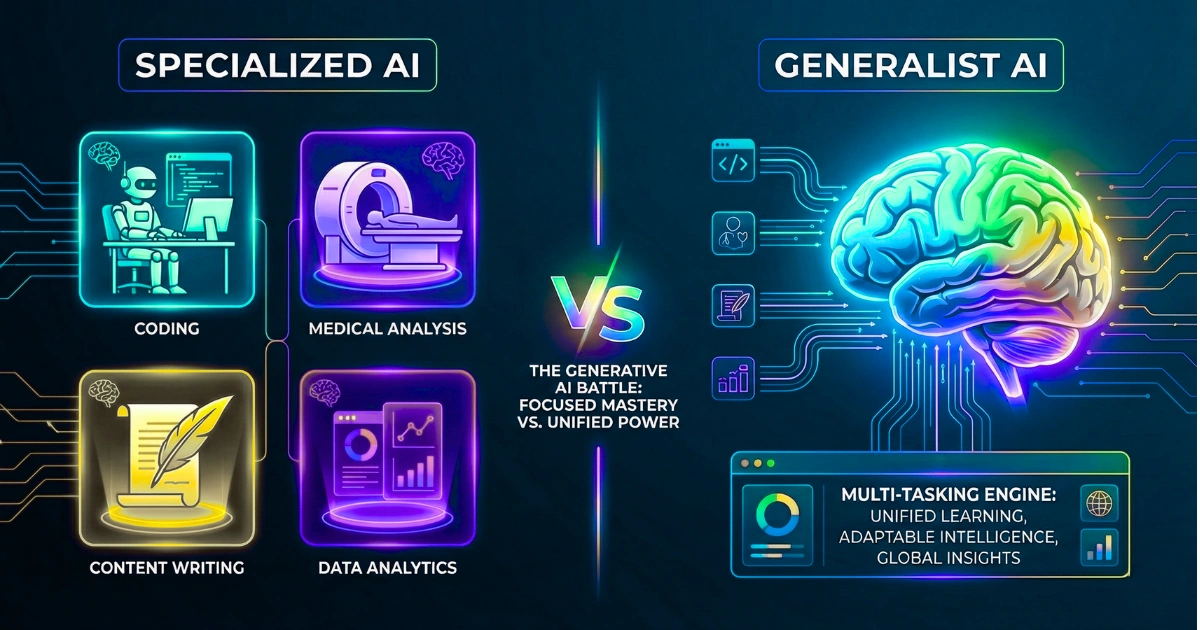

At the highest level, the battle shaping the future of AI comes down to two fundamentally different approaches:

- Specialized AI (Narrow AI) → built to dominate one task

- Generalist AI (general-purpose models, a step in the AGI direction) → built to handle many tasks

Both are powerful. Both are evolving fast. But they win in very different scenarios.

Let’s break this down properly—without hype, without vendor marketing noise.

The Core Difference: Depth vs. Breadth

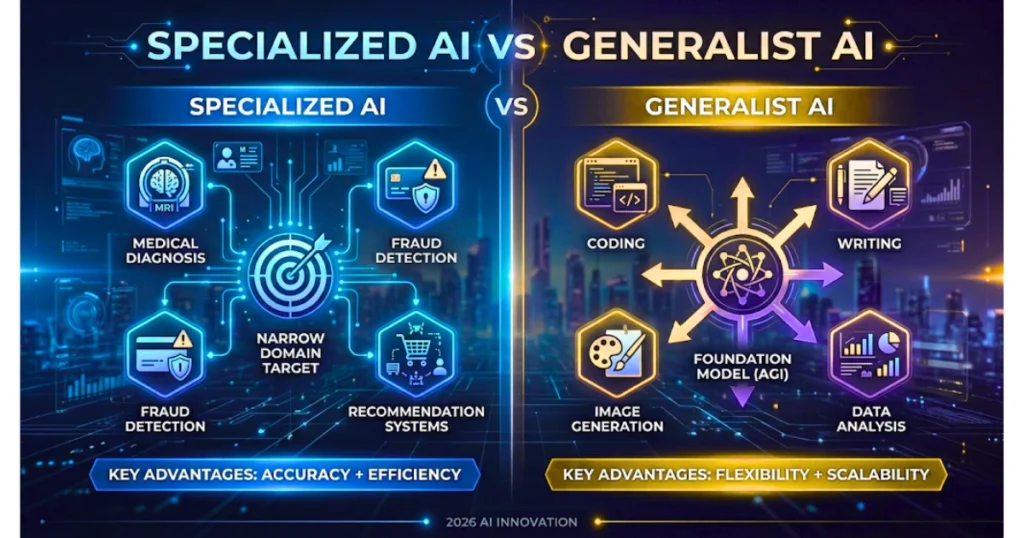

Specialized AI is designed for precision. It solves a clearly defined problem—exceptionally well. Think:

- Fraud detection systems

- Medical imaging diagnosis

- Recommendation engines

These models are trained on focused datasets, optimized for specific outputs, and engineered for predictability.

Generalist AI, often associated with the path toward Artificial General Intelligence (AGI), is designed for flexibility. It can:

- Write code

- Analyze documents

- Generate images

- Answer questions across domains

Instead of mastering one task, it aims to adapt across many.

The trade-off is simple:

- Specialized AI = accuracy + efficiency

- Generalist AI = flexibility + breadth (one model across many tasks)

Training Data: Massive vs. Targeted

One of the biggest differences lies in how these systems are trained.

Generalist AI

- Trained on massive, diverse datasets

- Typically has billions (sometimes trillions) of parameters

- Learns patterns across domains (language, code, images)

This gives it versatility—but at a cost:

- High compute requirements

- Longer training cycles

- More complexity

Specialized AI

- Trained on narrow, domain-specific datasets

- Optimized for a single objective

Result:

- Faster training

- Lower infrastructure cost

- Higher reliability in its niche

👉 Key insight: Generalist models learn many things reasonably well. Specialized models learn one thing extremely well.

Accuracy: Who Performs Better?

This is where reality cuts through hype.

Specialized AI Wins on Precision

In well-defined environments:

- Accuracy on the target task often reaches 95–99%, depending on the task and how accuracy is measured

- Performance is consistent and predictable

Example: A medical AI trained only on tumor detection will outperform a general model on that task almost every time.

Generalist AI Wins on Adaptability

Across varied tasks:

- Accuracy typically ranges from 70% to 90%, varying widely by task

- Performance depends heavily on prompting and context

Generalist systems can:

- Switch tasks instantly

- Handle ambiguity

- Work across domains

But they sacrifice depth for breadth.

Bottom line: If failure is expensive → choose specialized AI. If flexibility matters → choose generalist AI.
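A quick way to make "failure is expensive" concrete is to compare expected error cost: decisions × error rate × cost per error. The sketch below is a back-of-the-envelope model, not a benchmark; the decision volume, the $500 cost per error, and the two accuracy values (picked from inside the ranges quoted above) are hypothetical inputs.

```python
def expected_error_cost(num_decisions: int, accuracy: float, cost_per_error: float) -> float:
    """Expected cost of mistakes = decisions x error rate x cost of one error."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    error_rate = 1.0 - accuracy
    return num_decisions * error_rate * cost_per_error


# Hypothetical scenario: 10,000 decisions per month, each error costs $500.
specialized = expected_error_cost(10_000, accuracy=0.97, cost_per_error=500.0)
generalist = expected_error_cost(10_000, accuracy=0.85, cost_per_error=500.0)

print(f"specialized: ${specialized:,.0f}")
print(f"generalist:  ${generalist:,.0f}")
```

With these inputs the specialized model's expected error cost is about $150,000 versus about $750,000 for the generalist. The gap grows linearly with the cost of a single error, which is why high-stakes domains lean specialized.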

Compute & Infrastructure: Heavy vs. Efficient

Generalist AI

- Requires large-scale infrastructure (GPUs, cloud clusters)

- Expensive to train and run

- Energy-intensive

These systems are engineering-heavy and not always cost-efficient for narrow use cases.

Specialized AI

- Can run on lighter infrastructure

- Easier to deploy in production

- More cost-effective for businesses

This is why most real-world systems today rely on specialized AI under the hood.
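Part of the infrastructure gap is plain arithmetic: just holding a model's weights in memory takes roughly parameter count × bytes per parameter. The sketch below assumes 16-bit weights (2 bytes) for a large generalist model and 32-bit weights (4 bytes) for a small specialized one; both parameter counts are illustrative, and real deployments need extra memory for activations and caches on top of this.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: int, dtype: str = "fp16") -> float:
    """Approximate memory to hold the weights alone, in gigabytes (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Illustrative sizes only (not specific products):
generalist_params = 70_000_000_000   # a 70B-parameter general-purpose model
specialized_params = 25_000_000      # a 25M-parameter task-specific model

print(weight_memory_gb(generalist_params, "fp16"))   # weights for the generalist
print(weight_memory_gb(specialized_params, "fp32"))  # weights for the specialist
```

That works out to about 140 GB versus 0.1 GB (100 MB). The first is more than a single 80 GB accelerator can hold; the second fits comfortably on an ordinary server.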

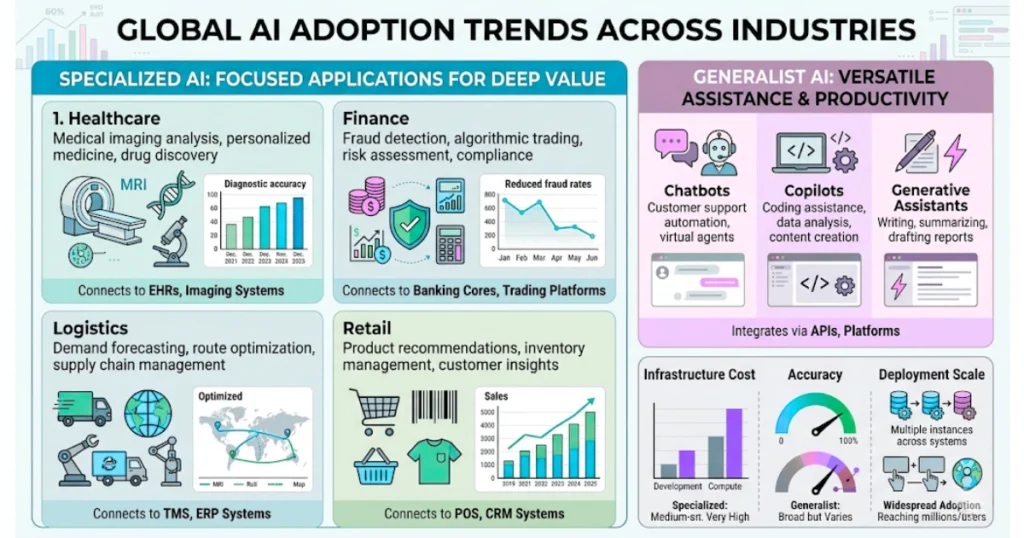

Market Reality: What Businesses Actually Use

Despite all the hype around AGI…

An estimated 90% or more of real-world AI deployments today are specialized AI.

Industries using it heavily:

- Healthcare (diagnostics, imaging)

- Finance (fraud detection, risk scoring)

- Retail (recommendations, demand forecasting)

- Logistics (route optimization)

Generalist AI Status:

- Still evolving

- Limited real-world autonomy

- Mostly used as a layer on top of systems, not the system itself

Market penetration is still very low compared to specialized AI.

Where Generative AI Fits In

Here’s where things get interesting.

Generative AI (like text, image, or code generation systems) sits somewhere in between. It behaves like a generalist interface, but often relies on:

- Specialized tools

- APIs

- External systems

Example: A generative AI system writing marketing content uses general language understanding, but may call specialized tools for:

- SEO analysis

- Data retrieval

- Image generation

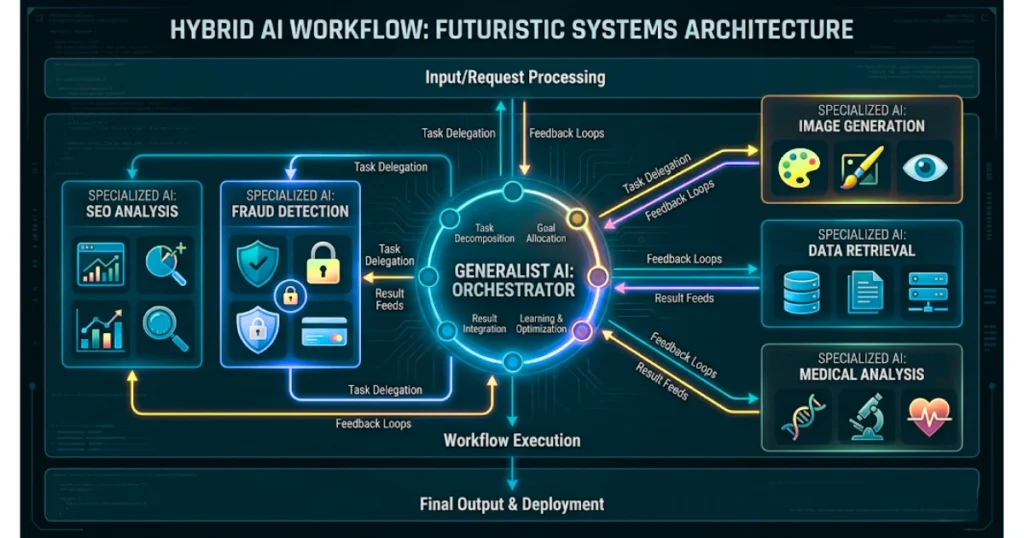

This creates a hybrid model architecture:

- Generalist brain

- Specialized execution layers

This is the direction modern AI systems are heading.
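Here is a minimal sketch of the "generalist brain, specialized execution layers" pattern: the generalist model emits a structured tool call, and plain code routes it to a specialized function. The JSON shape (`{"tool": ..., "arguments": ...}`), the tool names `seo_analysis` and `retrieve_data`, and their toy logic are all hypothetical; no real model or vendor API is called.

```python
import json
from typing import Callable, Dict


# Hypothetical specialized tools. In a real system these would wrap an SEO
# service, a search index, an image model, and so on.
def seo_analysis(text: str, keyword: str) -> dict:
    """Toy SEO check: total word count and how often the keyword appears."""
    words = text.lower().split()
    return {"word_count": len(words), "keyword_count": words.count(keyword.lower())}


def retrieve_data(key: str) -> dict:
    """Toy data retrieval from a hypothetical in-memory store."""
    store = {"brand_name": "ExampleCo"}
    return {"value": store.get(key, "not found")}


# Registry the generalist "brain" can choose from, by name.
TOOLS: Dict[str, Callable[..., dict]] = {
    "seo_analysis": seo_analysis,
    "retrieve_data": retrieve_data,
}


def dispatch(tool_call_json: str) -> dict:
    """Run one tool call that the generalist model emitted as JSON of the
    (assumed) form {"tool": <name>, "arguments": {...}}."""
    call = json.loads(tool_call_json)
    name = call["tool"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call["arguments"])


# In practice a language model would produce this string; here it is hard-coded.
print(dispatch('{"tool": "seo_analysis", "arguments": {"text": "AI tools for AI teams", "keyword": "ai"}}'))
```

The design choice that matters: the generalist model only decides *which* tool to call and with what arguments, while the specialized function does the actual work. Rejecting unknown tool names keeps that boundary controlled.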

The Future: Convergence, Not Competition

The idea that one will replace the other is flawed. The real future looks like this:

1. Generalist AI as the Orchestrator

- Handles reasoning

- Breaks down tasks

- Decides what needs to be done

2. Specialized AI as Executors

- Perform specific actions

- Deliver high-accuracy outputs

- Handle domain-critical operations

Think of it like a company:

- Generalist AI = Manager

- Specialized AI = Skilled employees

You need both.
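The manager/employee split can be sketched as a plan-then-delegate loop. In this sketch, `plan` is a hypothetical rule-based stand-in for the generalist model (a real orchestrator would ask an LLM for the plan), and the two executors use placeholder logic rather than real fraud or summarization models.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    executor: str  # which specialized executor should run this step
    payload: str   # input for that executor


# Hypothetical specialized executors (the "skilled employees").
def fraud_score(amount: str) -> str:
    # Placeholder threshold rule, standing in for a trained fraud model.
    return "flag" if float(amount) > 10_000 else "ok"


def summarize(text: str) -> str:
    # Placeholder: keep only the first sentence.
    return text.split(".")[0] + "."


EXECUTORS: Dict[str, Callable[[str], str]] = {
    "fraud_score": fraud_score,
    "summarize": summarize,
}


def plan(request: str) -> List[Step]:
    """Stand-in for the generalist model: break a request into steps."""
    steps: List[Step] = []
    for part in request.split(";"):
        part = part.strip()
        if part.startswith("check "):
            steps.append(Step("fraud_score", part[len("check "):]))
        elif part.startswith("summarize "):
            steps.append(Step("summarize", part[len("summarize "):]))
    return steps


def orchestrate(request: str) -> List[str]:
    """Manager loop: plan, delegate each step, collect results in order."""
    return [EXECUTORS[step.executor](step.payload) for step in plan(request)]


print(orchestrate("check 25000; check 120; summarize Orders shipped. Two were late."))
```

Swapping the rule-based `plan` for a language model changes who does the reasoning, but the executors, and their accuracy on domain-critical work, stay the same.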

Business Strategy: Which Should You Choose?

It depends on your objective.

Choose Specialized AI if:

- You need high accuracy

- Your problem is clearly defined

- You want cost-efficient deployment

- Risk tolerance is low

Choose Generalist AI if:

- You need flexibility across tasks

- Workflows are dynamic or unpredictable

- You want faster experimentation

- You’re building multi-step systems

Best Strategy (2026 and beyond): Combine both. Use generalist AI for thinking. Use specialized AI for execution.
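The two checklists above can be encoded as a simple decision helper. This is a sketch that treats every criterion as an equal-weight yes/no answer, which is an assumption; a real assessment would weigh each criterion by business impact. The field names are hypothetical labels for the bullets above.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    # Criteria from "Choose Specialized AI if"
    needs_high_accuracy: bool = False
    clearly_defined_problem: bool = False
    cost_efficient_deployment: bool = False
    low_risk_tolerance: bool = False
    # Criteria from "Choose Generalist AI if"
    needs_flexibility: bool = False
    dynamic_workflows: bool = False
    fast_experimentation: bool = False
    multi_step_system: bool = False


def recommend(req: Requirements) -> str:
    """Count which checklist each requirement falls under (equal weights)."""
    specialized = sum([req.needs_high_accuracy, req.clearly_defined_problem,
                       req.cost_efficient_deployment, req.low_risk_tolerance])
    generalist = sum([req.needs_flexibility, req.dynamic_workflows,
                      req.fast_experimentation, req.multi_step_system])
    if specialized and generalist:
        return "hybrid: generalist orchestrator + specialized executors"
    if specialized:
        return "specialized"
    if generalist:
        return "generalist"
    return "undetermined: clarify requirements first"


# Example: a low-risk-tolerance fraud workflow that also spans multiple steps.
print(recommend(Requirements(needs_high_accuracy=True, low_risk_tolerance=True,
                             multi_step_system=True)))
```

As soon as a workflow ticks boxes on both lists, the helper lands on the hybrid answer, which matches the strategy above.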

The Real Competitive Advantage

The companies winning right now are not choosing sides. They are:

- Integrating generalist AI into workflows

- Connecting it to specialized systems

- Building controlled, layered architectures

Final Verdict: Who Wins?

Neither.

Specialized AI dominates execution. Generalist AI dominates orchestration. The real winner is the system that combines both effectively.

Closing Thought

The conversation shouldn’t be: “Which AI is better?”

It should be: “Where does each model create the most leverage in my workflow?”

Because in the generative era, productivity doesn’t come from choosing the most powerful model. It comes from designing the right system around it.