Exploring AI, One Insight at a Time

AI Strategy for Businesses: How to Win While Competitors Waste Budget

We are entering an era of pervasive, relentless compute.

Foundation models are actively restructuring every business layer, operational silo, and market dynamic. This forces technical leaders into an immediate, high-stakes binary: adapt or become obsolete.

Market hysteria over closed APIs versus custom open-source architectures obscures the clear path forward.

Engineering teams remain paralyzed by the choice between large legacy models and smaller, optimized ones. Your competitors are burning their runway fighting this battle on the wrong front.

Human Attention is a Depreciating Asset

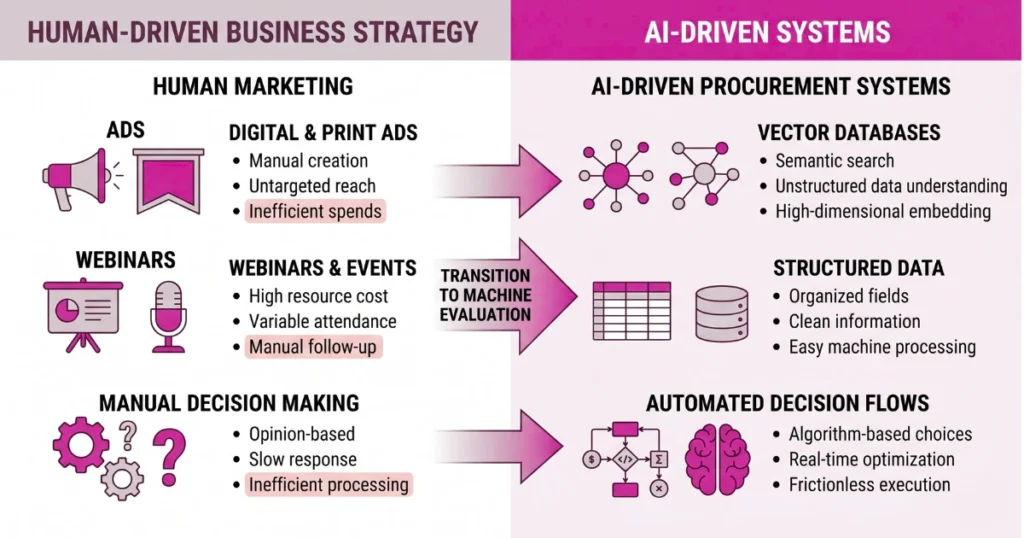

Your marketing department is wasting budget.

The market is rapidly phasing human buyers out of the enterprise procurement loop. Executives now delegate purchasing decisions to autonomous agents and LLM-driven discovery tools.

These algorithmic buyers do not watch webinars or read promotional copy. You must engineer a growth strategy built explicitly for these autonomous advisors.

Shift your capital expenditure entirely away from human-targeted advertising. Aggressively secure placement within the proprietary data pipelines and vector databases that feed enterprise AI tools.

Unstructured Documentation is Architectural Sabotage

Algorithms lack the capacity for brand loyalty.

If an enterprise LLM cannot parse your product specifications, metadata, and pricing arrays deterministically, it will bypass your architecture.

For example, we saw this exact failure mode cripple a leading SaaS provider last Tuesday. They became just another casualty of the enterprise AI sandbox illusion.

Specifically, a broken YAML schema and an unindexed Markdown table sent an external agent's web scraper into an infinite loop.

The repeated failed parsing attempts ran up a $14,200 AWS Lambda bill for the prospect before anyone noticed. The prospect immediately ejected the vendor from a $2M contract consideration.
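The runaway loop in this story is the kind of failure a hard retry cap prevents. Here is a minimal sketch of that idea, not a reconstruction of the agent involved. The function name `fetch_and_parse`, the `max_attempts` parameter, and the use of JSON as a stand-in for the YAML spec are all assumptions made for illustration.

```python
import json
from typing import Callable, Optional


def fetch_and_parse(fetch: Callable[[], str], max_attempts: int = 3) -> Optional[dict]:
    """Fetch a spec and parse it as JSON, giving up after max_attempts tries.

    `fetch` is a hypothetical callable that returns the raw payload as text.
    """
    for _ in range(max_attempts):
        raw = fetch()
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed payload: retry, but only up to the cap
        if isinstance(parsed, dict):
            return parsed
    return None  # bounded failure: the caller decides what happens next
```

With a cap in place, a malformed spec costs a fixed number of attempts rather than an open-ended compute bill. The vendor still loses the parse, which is why the fix belongs on both sides.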

Optimize your product’s metadata to be rigidly machine-readable. Define a strict, unforgiving set of “Algorithm KPIs” across the board. These metrics must measure parse rates, context-window efficiency, and semantic search visibility.
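As a rough illustration of one such metric, the sketch below computes a parse rate over a batch of product records. The field names in `REQUIRED_FIELDS`, the JSON encoding, and both function names are hypothetical; substitute whatever schema your catalog actually publishes. It covers parse rate only, not context-window efficiency or search visibility.

```python
import json

# Hypothetical required fields and their expected types.
REQUIRED_FIELDS = {
    "sku": str,
    "name": str,
    "price_usd": (int, float),
    "currency": str,
}


def validate_product(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the record parsed cleanly."""
    try:
        record = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(record, dict):
        return ["top-level value is not an object"]
    problems = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(record[field], bool) or not isinstance(record[field], expected):
            problems.append(f"wrong type for field: {field}")
    return problems


def parse_rate(raw_records: list[str]) -> float:
    """Share of records that validate with zero problems (0.0 for an empty batch)."""
    if not raw_records:
        return 0.0
    clean = sum(1 for raw in raw_records if not validate_product(raw))
    return clean / len(raw_records)
```

Running a check like this in CI against every published spec turns "machine-readable" from a slogan into a number you can track release over release.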

The Silicon Shortage Dictates Model Topologies

Compute is finite.

- The ongoing global chip shortage mandates a brutal pivot away from sprawling, general-purpose inference.

- Small, domain-specific models typically deliver lower latency and tighter control over inference costs.

- Relying on large hosted-model APIs exposes your core product to unannounced throttling and severe downstream latency.

Your survival requires building for machines. They evaluate your stack solely on schema clarity and token efficiency. If an agent hits an undocumented rate limit or fails to vectorize your catalog in sub-200 milliseconds, your competitor gets the contract.
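One way to keep that 200-millisecond figure honest is to measure it directly. The sketch below times an arbitrary operation against a latency budget. The helper name `within_budget` and the idea of passing the workload as a callable are assumptions for illustration; the workload itself (vectorizing a catalog, serving a spec) is whatever you plug in.

```python
import time
from typing import Callable

LATENCY_BUDGET_MS = 200.0  # the sub-200 ms threshold mentioned above


def within_budget(
    operation: Callable[[], object],
    budget_ms: float = LATENCY_BUDGET_MS,
) -> tuple[bool, float]:
    """Run `operation` once and report (met_budget, elapsed_ms)."""
    start = time.perf_counter()
    operation()
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return elapsed_ms < budget_ms, elapsed_ms
```

A single timing is noisy, so in practice you would likely run the check many times and watch a high percentile rather than one sample.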