Beyond Static Images: The Future of AI in Creative Branding

Let’s cut through the noise for a second: the artificial intelligence boom is exhausting. Every week, there’s a new tool claiming it can replace designers, photographers, and entire creative teams.

But once you move past the demos and try using these tools inside a real production workflow, the story changes.

AI isn’t replacing creative work. It’s reshaping how that work gets done.

The shift isn’t about generating random images faster. It’s about removing bottlenecks in how brands produce, adapt, and scale visual assets.

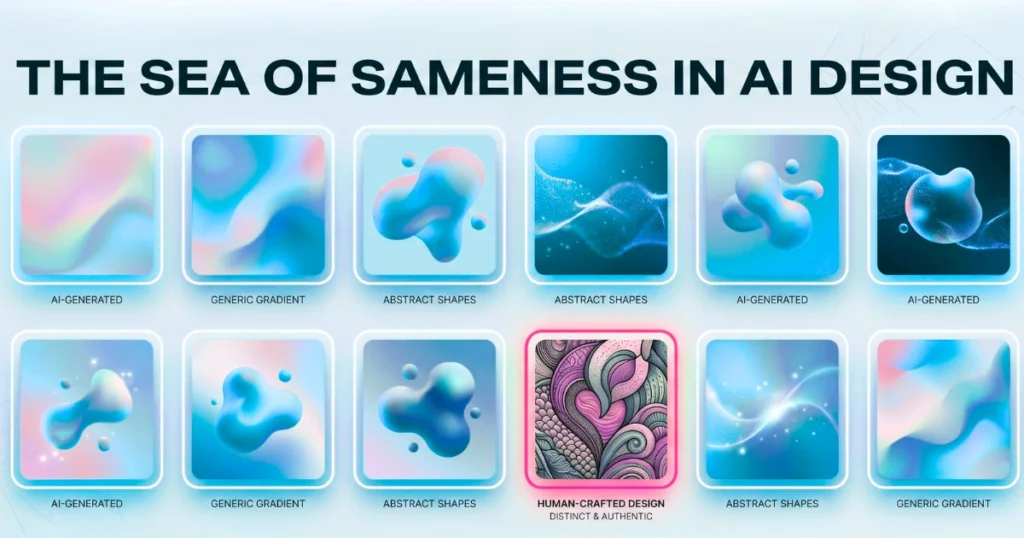

The Real Problem: The “Sea of Sameness”

The biggest risk right now isn’t ignoring AI. It’s using it exactly like everyone else.

Tools like Midjourney, DALL·E, and Stable Diffusion have made high-quality visuals accessible to everyone. The result? A flood of identical-looking content—neon gradients, abstract 3D blobs, overly polished “AI aesthetics” that feel interchangeable across brands.

If your audience can instantly recognize that your visuals are AI-generated, you’ve already lost differentiation.

The shift: use AI for exploration and iteration, and don’t accept its output blindly as the final asset.

Strong branding still depends on:

- Curation

- Context

- Human judgment

AI accelerates the process—but it doesn’t define the identity.

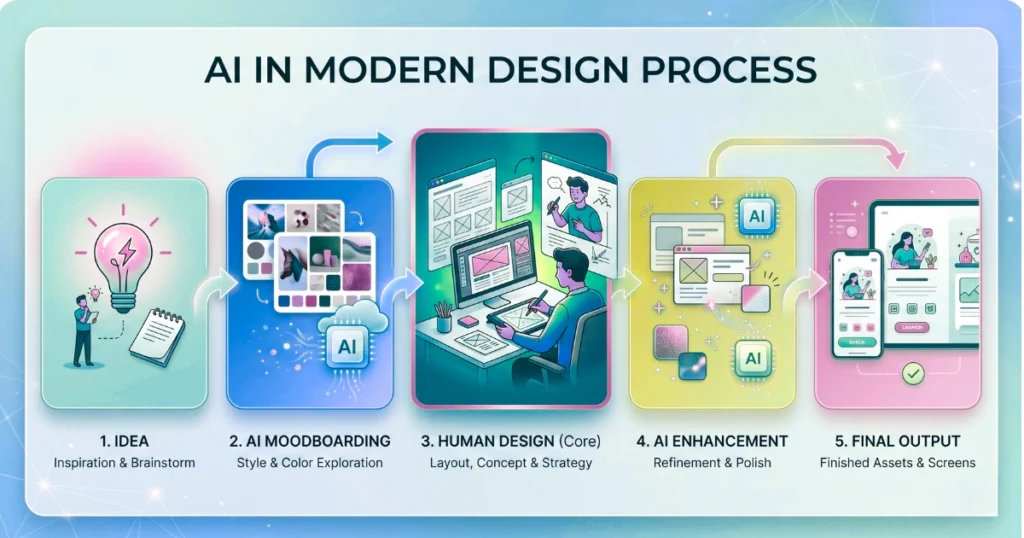

Where AI Actually Fits in a Modern Design Workflow

Instead of treating AI like a magic image generator, it works best as a support layer inside an existing pipeline.

Here’s how that plays out in real projects.

1. Rapid Moodboarding (Before Design Starts)

Instead of digging through stock libraries or Pinterest for hours, AI can generate multiple visual directions instantly.

You can test:

- Color systems

- Layout styles

- Visual tone

This speeds up alignment with clients or stakeholders before any real design work begins. What matters is not the output itself but the direction it helps you choose.

2. Fixing Layout Problems (Not Just Creating Images)

One of the most practical uses of AI isn’t generation. It’s correction.

In real-world design:

- Images don’t fit containers

- Layouts break across screen sizes

- Assets need resizing or extension

AI tools like generative fill and outpainting solve these issues cleanly:

- Extend backgrounds for wide screens

- Adjust compositions without distortion

- Maintain visual consistency across breakpoints

This can save hours of manual editing.
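To make "extend backgrounds for wide screens" concrete, here is a minimal sketch of the arithmetic that comes before any outpainting step: working out how many pixels of new canvas each side needs so an image reaches a target aspect ratio without being stretched or cropped. The names `Padding` and `outpaint_padding` are hypothetical, and the sketch does not call any particular tool. It only computes the region a generative fill or outpainting feature would be asked to fill.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Padding:
    """Pixels of new canvas to add on each side (hypothetical helper type)."""
    left: int
    right: int
    top: int
    bottom: int


def outpaint_padding(width: int, height: int, target_w: int, target_h: int) -> Padding:
    """Return the padding that brings a width x height image to the
    target_w:target_h aspect ratio without scaling or cropping it."""
    if min(width, height, target_w, target_h) <= 0:
        raise ValueError("all dimensions must be positive")

    # Compare aspect ratios by cross-multiplying, which avoids float rounding.
    if width * target_h < height * target_w:
        # Image is too narrow for the target: widen the canvas, keep the height.
        new_w = -(-height * target_w // target_h)  # ceiling division
        extra = new_w - width
        return Padding(extra // 2, extra - extra // 2, 0, 0)

    # Image is too wide (or already matches): grow the height, keep the width.
    new_h = -(-width * target_h // target_w)  # ceiling division
    extra = new_h - height
    return Padding(0, 0, extra // 2, extra - extra // 2)


# A square 1080 x 1080 product shot placed in a 16:9 hero banner
# needs 420 px of generated background on the left and on the right.
print(outpaint_padding(1080, 1080, 16, 9))
```

Centering the original image is an assumption made for simplicity. In practice a designer might anchor the subject to one side and extend only in the other direction.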

3. Enhancing Real Assets (Instead of Replacing Them)

AI performs best when it improves existing visuals rather than replacing them entirely.

Examples:

- Cleaning up product images

- Adjusting lighting in team photos

- Removing distractions from backgrounds

- Standardizing color grading

This approach keeps authenticity intact while upgrading quality.

The Shift from Static Assets to Dynamic Systems

The bigger transformation isn’t in individual images—it’s in how they’re created and delivered.

Traditional branding relies on pre-designed assets that are stored in large libraries and reused across campaigns.

That model is starting to break.

AI enables a move toward dynamic generation, where visuals adapt based on:

- User behavior

- Location

- Time

- Context

Instead of designing 50 static variations, you define a system that can generate the right variation when needed. This doesn’t eliminate design—it moves it upstream into system design and constraints.
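One way to picture "defining a system" rather than designing every variation is a small set of rules that maps context to a variant specification. The sketch below is illustrative only: the `Context` fields, the palette and background names, and the 18:00 to 06:00 evening window are all assumptions, and the resulting `VariantSpec` would be handed to whatever template or generation step a team already uses.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class Context:
    """Signals the system adapts to (field names are hypothetical)."""
    region: str
    local_time: time
    returning_visitor: bool


@dataclass(frozen=True)
class VariantSpec:
    """Design decisions fixed upstream; a template or generator fills in the rest."""
    palette: str
    headline_tone: str
    background: str


# Constraints owned by the design team: the system only picks from these values.
REGION_BACKGROUNDS = {"EU": "city-street", "US": "open-road"}
DEFAULT_BACKGROUND = "studio-neutral"


def choose_variant(ctx: Context) -> VariantSpec:
    """Pick one on-brand variant for the given context."""
    # Assumed rule: use the dark palette between 18:00 and 06:00 local time.
    is_evening = ctx.local_time >= time(18, 0) or ctx.local_time < time(6, 0)
    return VariantSpec(
        palette="brand-dark" if is_evening else "brand-light",
        headline_tone="welcome-back" if ctx.returning_visitor else "introduction",
        background=REGION_BACKGROUNDS.get(ctx.region, DEFAULT_BACKGROUND),
    )


# A returning visitor in the EU at 20:30 gets the dark, welcome-back variant.
print(choose_variant(Context("EU", time(20, 30), True)))
```

The design work here lives in the allowed values and the rules, which is the "upstream" shift described above: two palettes, two tones, and three backgrounds already cover twelve combinations without anyone producing twelve files by hand.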

The Business Impact: Why This Actually Matters

This isn’t just a creative upgrade. It changes how teams operate.

Speed Without Losing Control

Teams can produce more variations faster while still maintaining quality through review and constraints.

Lower Production Costs

You reduce reliance on:

- Stock subscriptions

- Repetitive manual edits

- Large-scale asset production

Scalable Content Systems

AI allows smaller teams to handle workloads that previously required entire creative departments.

The Catch: Where Teams Still Go Wrong

Even with all these advantages, teams tend to fail in the same ways:

- Treating AI outputs as final assets

- Ignoring brand consistency

- Over-automating without review

- Prioritizing speed over identity

The result isn’t better branding—it’s diluted branding.

The solution isn’t avoiding AI. It’s controlling how it’s used.
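As a rough illustration of what "controlling how it’s used" could look like in a pipeline, the sketch below gates publishing on two conditions: the asset’s dominant colors sit close to the brand palette, and a person has signed off. Everything here is an assumption made for the example, including the palette values, the distance tolerance, and the `Asset` fields. Plain RGB distance is also a crude stand-in for a proper perceptual color comparison.

```python
import math
from dataclasses import dataclass
from typing import Optional

BRAND_PALETTE = ["#1A1A2E", "#E94560", "#F5F5F5"]  # hypothetical brand colors
MAX_DISTANCE = 60.0  # hypothetical tolerance, measured in RGB space


def hex_to_rgb(value: str) -> tuple:
    """Convert '#RRGGBB' to an (r, g, b) tuple of ints."""
    value = value.lstrip("#")
    return tuple(int(value[i:i + 2], 16) for i in (0, 2, 4))


def off_brand_colors(dominant_colors: list) -> list:
    """Return the colors that are not close to any brand color."""
    brand = [hex_to_rgb(c) for c in BRAND_PALETTE]
    flagged = []
    for color in dominant_colors:
        nearest = min(math.dist(hex_to_rgb(color), b) for b in brand)
        if nearest > MAX_DISTANCE:
            flagged.append(color)
    return flagged


@dataclass
class Asset:
    name: str
    dominant_colors: list
    approved_by: Optional[str] = None  # set only after a human review


def ready_to_publish(asset: Asset) -> bool:
    """An asset ships only if it is on-palette AND a person approved it."""
    return not off_brand_colors(asset.dominant_colors) and asset.approved_by is not None


draft = Asset("hero-banner", ["#E94560", "#00FF00"])
print(off_brand_colors(draft.dominant_colors))  # the green is flagged as off-brand
print(ready_to_publish(draft))
```

The automated check catches obvious drift cheaply, but the `approved_by` field is the part that matters: nothing goes out on the strength of the check alone.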

The Future: Hybrid, Not Automated

The future of creative branding isn’t fully automated pipelines.

It’s hybrid workflows where:

- AI handles repetition and scale

- Humans handle taste, direction, and final decisions

The most effective teams won’t be the ones using the most tools. They’ll be the ones who:

- Know where AI fits

- Know where it doesn’t

- And build workflows around that boundary

Final Thought

AI is not a shortcut to great design. It’s a force multiplier for teams that already understand what they’re building.

If you treat it like a vending machine for visuals, you’ll get generic output. If you treat it like a system component, you can build faster, scale smarter, and still maintain a strong brand identity.

The difference isn’t the tool. It’s how you use it.