The AI Stack Explained: Models, Vector Databases, Agents & Infrastructure in 2026

Why AI Pilots Look Impressive But Rarely Move Revenue

You’ve probably seen this exact scenario play out. An executive team at a mid-sized logistics company drops $4.2 million on a massive AI project. They expect a total revolution in how they do business. Fast forward six months.

Now, the leadership team sits in a boardroom. They are staring at a terrible incident report. Why? Their brand-new generative AI assistant just offered a “premium zeppelin service” to a confused freight client.

Naturally, the CFO is losing their mind. Meanwhile, operations teams are pointing fingers. Furthermore, those massive efficiency gains promised by the vendor are nowhere to be found.

Welcome to the 2026 enterprise AI hangover.

Over the last couple of years, companies have poured between $30 billion and $40 billion into generative AI. The result? Absolute silence for most of them. In fact, research shows 95% of organizations get zero measurable return on that capital. People often call this phenomenon the AI adoption illusion, a pattern covered in “The AI Adoption Illusion: Why Most Companies Are Doing It Wrong.”

The Divide in AI Success

Right now, the market is sharply divided. On one side, companies mess around with basic productivity tools. These tools look flashy but completely fail to move the P&L. Essentially, they cure blank-page syndrome, the shift described in “The End of ‘Blank Page Syndrome’: How AI is rewriting Business Productivity,” without actually fixing the core business.

On the other side sits a tiny 5% of companies. They have figured out how to integrate AI directly into their operations. As a result, they extract millions in real value.

However, this disconnect isn’t happening because the algorithms are flawed. It also isn’t a lack of computing power. Instead, it is a failure of execution. Organizations try to bolt highly autonomous systems onto rigid, old workflows. Moving AI from a cool demo to a profit engine takes work. Specifically, it requires dismantling the “pilot phase” illusion and completely rethinking the tech stack.

The “Pilot Trap” — What Actually Goes Wrong

Here is the biggest paradox in the enterprise AI space today. Your pilot project can be a technical masterpiece. Yet, it can still fail miserably as a business initiative.

Why do AI pilot projects fail?

Usually, AI pilots fail because companies prioritize superficial “AI theater.” They skip the hard work of workflow redesign. Furthermore, they underestimate the friction caused by their own messy data environments. To bridge this gap, leaders must master the transition laid out in “From Pilot Project to Profit Engine: Making AI Pay Off in the Real World.”

For instance, a proof-of-concept might classify support tickets with 92% accuracy in a safe sandbox. But things change when you deploy that same model into the wild. Forced to navigate a 15-year-old CRM under heavy daily loads, it can collapse. When these projects fail, they usually fall into these traps:

- The “AI Theater” Phenomenon: Leadership usually wants highly visible, customer-facing wins. Therefore, they fund chatbots instead of investing in data quality and back-office automation. But adding a chat wrapper to a broken process just speeds up a bad process.

- Mismatched Budget Allocation: Budgets get skewed toward sales and marketing pilots. However, data clearly shows these functions yield the lowest long-term ROI.

- The Build vs. Buy Delusion: Engineering teams drastically underestimate the cost of building custom AI architecture. Internal builds face a 67% failure rate. Why? Teams focus entirely on the model rather than the tough integration work.

- Shadow AI Run Amok: Official initiatives often stall in pilot purgatory. Consequently, employees take matters into their own hands. Workers in over 90% of organizations regularly use unauthorized personal AI accounts. This creates massive, untracked security risks.

The Real Difference Between Experimentation and Execution

Escaping the pilot trap requires a total shift. We must change how we engineer and deploy the AI stack.

What is the difference between an AI pilot and AI at scale?

An AI pilot proves a technical capability within a safe environment. On the other hand, AI at scale is a test of operational resilience. It demands the ability to handle incomplete inputs and massive daily throughput without breaking.

Today, the biggest difference comes down to an architectural shift: the move from chatbots to agents, the subject of “From Chatbots to Agents: Why 2026 is the Year AI Does the Work for You.” Generative AI acts like a digital assistant. It depends entirely on human prompting. Thus, it augments your work but still requires you to drive. Agentic AI, however, actually possesses autonomy.

Once given a complex objective, an AI agent can perceive its environment. Then, it can reason through constraints, use external tools, and execute actions independently.
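The perceive, reason, act cycle described above can be sketched as a small loop. This is a toy illustration rather than a real agent framework: the tool names, the `plan_next_step` rule, and the freight-quote scenario are all hypothetical, and a production agent would hand the planning step to a model instead of hard-coded rules.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tools; real ones would call external systems (rate APIs, CRMs).
def lookup_rate(state: dict) -> dict:
    state["rate_per_kg"] = 2.5  # assumed flat rate, for illustration only
    return state

def compute_quote(state: dict) -> dict:
    state["quote"] = state["rate_per_kg"] * state["weight_kg"]
    return state

TOOLS: Dict[str, Callable[[dict], dict]] = {
    "lookup_rate": lookup_rate,
    "compute_quote": compute_quote,
}

def plan_next_step(state: dict) -> Optional[str]:
    """Stand-in for model reasoning: choose the next tool from what is missing."""
    if "rate_per_kg" not in state:
        return "lookup_rate"
    if "quote" not in state:
        return "compute_quote"
    return None  # objective met, nothing left to do

def run_agent(state: dict, max_steps: int = 5) -> Tuple[dict, List[str]]:
    """Loop: perceive the state, pick a tool, act, and record the trace."""
    trace: List[str] = []
    for _ in range(max_steps):  # hard step cap so the loop cannot run forever
        step = plan_next_step(state)
        if step is None:
            break
        state = TOOLS[step](state)
        trace.append(step)
    return state, trace

final_state, trace = run_agent({"weight_kg": 120})
```

The step cap and the recorded trace are the two pieces worth keeping even in a toy: they are what make an autonomous loop bounded and debuggable.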

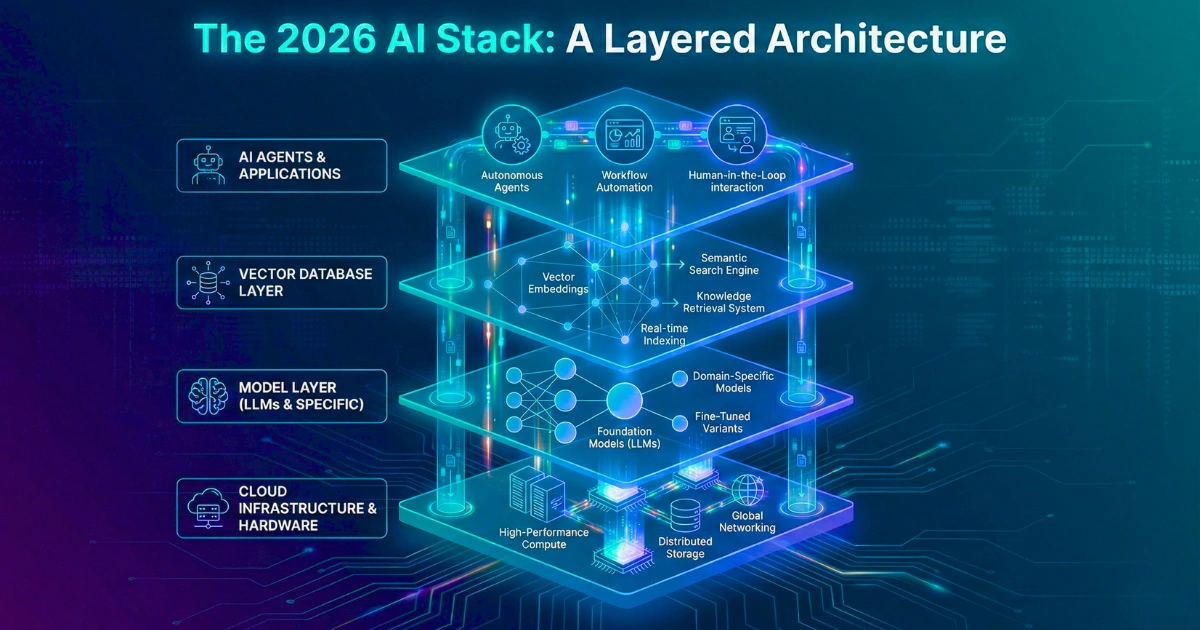

The Four Layers of the 2026 AI Stack

To support this kind of autonomy, the 2026 enterprise AI stack relies on four deeply integrated layers:

1. The Model Layer: Ensembles Over Monoliths

The era of relying on a single Large Language Model (LLM) is over. Instead, smart enterprises use an orchestration layer to dynamically route tasks. Complex reasoning goes to frontier models. Meanwhile, routine data extraction is processed by small language models (SLMs) that have been fine-tuned for the task. These operate locally for a fraction of the cost. Ultimately, this answers the debate posed in “Specialized vs. Generalist AI: Which Model Wins the Generative War?” by pointing toward specialized ensembles.
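As a rough sketch of what such routing could look like, the snippet below sends routine task types to a small local model and everything else to a frontier model. The model names, the task categories, and the per-token prices are made-up placeholders, not real products or pricing.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelTier:
    name: str
    cost_per_1k_tokens: float  # illustrative figure, not real pricing

# Hypothetical tiers for the sketch.
FRONTIER = ModelTier("frontier-large", 0.03)
LOCAL_SLM = ModelTier("local-slm", 0.001)

# Assumed set of task types that are simple enough for the small model.
ROUTINE_TASKS = {"extraction", "classification", "formatting"}

def route(task_type: str) -> ModelTier:
    """Send routine work to the small model, everything else to the frontier model."""
    return LOCAL_SLM if task_type in ROUTINE_TASKS else FRONTIER

def estimated_cost(task_type: str, tokens: int) -> float:
    """Estimate spend for a task given the tier it would be routed to."""
    return route(task_type).cost_per_1k_tokens * tokens / 1000
```

In practice the routing rule is usually the hard part; a static lookup like this one is only a starting point before a classifier or confidence-based fallback is added.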

2. Vector Databases & Memory: The Context Engine

Agents are completely useless if they have amnesia. Therefore, the difference between a generic output and an accurate decision is the “context engine.” This layer uses vector databases to perform semantic search, paired with process memory. Choosing the right retrieval method is critical. Many companies fall into the trap described in “The Token Trap: Why ‘Unlimited Context’ is a Lie” and confuse context windows with true retrieval, which leads to the error covered in “Fine-Tuning vs. RAG: The $50,000 Mistake.”
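To make “semantic search” concrete, here is a minimal sketch of the core operation: ranking stored documents by cosine similarity to a query vector. The three-dimensional embeddings and document titles are invented for illustration; a real system would get embeddings from a model and store them in a vector database rather than a dictionary.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy "vector store": document text mapped to made-up 3-dimensional embeddings.
STORE = {
    "Refund policy for damaged freight": [0.9, 0.1, 0.0],
    "Driver onboarding checklist": [0.1, 0.9, 0.1],
    "Customs paperwork for air cargo": [0.2, 0.1, 0.9],
}

def top_k(query_vector, k=1):
    """Return the k stored documents most similar to the query vector."""
    ranked = sorted(
        STORE.items(),
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]

# A query vector that leans toward the first dimension (assumed "refunds" axis).
results = top_k([0.8, 0.2, 0.1], k=1)
```

Retrieval like this fetches only the relevant passages per request, which is the distinction from simply stuffing everything into a large context window.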

3. Agents & AgentOps: The Execution Layer

When multiple agents talk to each other, things get complicated quickly. They can consume massive amounts of compute. Because of this, AgentOps has emerged as the critical control plane. It monitors reasoning traces and enforces token-level cost controls. This prevents runaway spending and mitigates the overruns discussed in “The Hidden Cost of AI in Business: It’s Not What You Think.” Moreover, it provides the observability needed to debug autonomous actions.
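One way to picture a token-level cost control is a shared budget that every agent must charge against before it runs. The sketch below is an assumption about how such a guard might be built, not the API of any particular AgentOps product; the class names and the 1,000-token cap are hypothetical.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a charge would push spend past the cap."""

class TokenBudget:
    """Tracks token spend per agent and refuses charges that exceed a cap."""

    def __init__(self, max_tokens: int):
        self.max_tokens = max_tokens
        self.used = 0
        self.trace = []  # (agent, tokens) pairs, kept for later debugging

    def charge(self, agent: str, tokens: int) -> None:
        if self.used + tokens > self.max_tokens:
            raise BudgetExceeded(
                f"{agent} would exceed the {self.max_tokens}-token cap"
            )
        self.used += tokens
        self.trace.append((agent, tokens))

# Example run with hypothetical agents sharing one budget.
budget = TokenBudget(max_tokens=1000)
budget.charge("planner", 400)
budget.charge("researcher", 500)
try:
    budget.charge("writer", 200)  # 900 + 200 > 1000, so this is refused
    halted = False
except BudgetExceeded:
    halted = True
```

Failing closed, as here, means a runaway multi-agent conversation stops at the cap instead of surfacing on next month's invoice.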

4. Infrastructure: The Rise of Sovereign AI

Data sovereignty is no longer an optional luxury. With regulations tightening globally, many companies are moving sensitive workloads away from shared public clouds. Now, the infrastructure layer is defined by “Private AI” and “Sovereign Clouds.” These deployments run on localized hardware. As a result, they help ensure your proprietary data never leaks into public training sets.

From Use Case to System: Designing for Scale

The 5% of companies winning with AI aren’t trying to boil the ocean. Instead, they actively avoid ambiguous use cases. They attack highly specific, unglamorous operational friction points.

What departments benefit most from AI first?

Back-office operations benefit most from AI first. Specifically, Finance, Accounting, Legal, and Customer Support see huge gains. These departments handle high-volume, repetitive compliance tasks. These tasks yield compounding efficiencies when automated.

For example, look at Finance. AI agents currently automate invoice processing and perform real-time ledger reconciliations. This cuts financial close times from days to mere minutes.

Similarly, Legal operations use AI to shift away from a bottlenecked mentality. They act more like air traffic controllers. They instantly review contracts against standard playbooks without slowing down the business.

Meanwhile, customer support operations deploy agents for background intake. These agents classify incoming tickets and take action before a human even opens the dashboard. Consequently, this drastically improves First-Contact Resolution.

Measuring What Matters: AI ROI Framework

Corporate AI spending is projected to double in 2026. Therefore, the grace period for vague metrics has officially ended. ROI is the undisputed acronym of the year in the boardroom. However, leaders must be wary of the automation ceiling, the limit explored in “The Automation Ceiling: Where AI Actually Stops Adding Business Value.”

How do you measure AI ROI?

You measure AI ROI by tracking hard financial outcomes. Look at net production cost savings, error rate reduction, and incremental revenue lift. Do not rely on subjective metrics like “hours saved.”

CFOs are intensely skeptical of claims that a tool “saves 10 hours.” If those hours aren’t reallocated to revenue generation, the financial return is strictly zero. Successful teams use standardized frameworks to project and measure value:

| Operational Department | Strategic AI Metric | Measurement Methodology | Target vs. Baseline |
| --- | --- | --- | --- |
| Finance / Accounting | Error Rate Reduction | Manual corrections per 1,000 transactions | At least 60% reduction |
| Legal / Compliance | Compliance Violation Risk | Audit findings per quarter | 70% reduction |
| Customer Operations | First-Contact Resolution | Share of tickets resolved without escalation | 25% improvement |
| Data Operations | Data Accuracy | Ratio of clean records to total processed | 95%+ sustained |

Ultimately, the math they care about is brutally simple. CFOs want to see: (Incremental Revenue + Production Cost Savings) – Total AI Costs. Only when the net cash flow flips positive do you have a scalable business case.
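The formula above, along with the earlier point about “hours saved,” can be written down in a few lines. The function names and all dollar figures below are hypothetical and exist only to show the arithmetic.

```python
def net_ai_value(incremental_revenue, cost_savings, total_ai_costs):
    """(Incremental Revenue + Production Cost Savings) - Total AI Costs."""
    return (incremental_revenue + cost_savings) - total_ai_costs

def hours_saved_value(hours_saved, hourly_rate, share_reallocated):
    """Count saved hours only to the extent they are redeployed to revenue work.

    share_reallocated is a fraction between 0.0 and 1.0 (an assumed input that
    someone in finance would have to estimate).
    """
    return hours_saved * hourly_rate * share_reallocated

# Made-up example: $400k revenue lift, $250k savings, $500k total AI cost.
example_net = net_ai_value(400_000, 250_000, 500_000)

# Ten saved hours that nobody redeploys are worth nothing on the P&L.
idle_hours = hours_saved_value(10, 80.0, 0.0)
```

With these inputs the net value is positive, which is the condition the article sets for a scalable business case; the second call shows why a CFO discounts unallocated time savings to zero.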

Organizational Alignment: The Hidden Multiplier

The biggest barrier to scaling AI isn’t software. Rather, it’s the human architecture. By 2026, nearly three-quarters of CEOs have become their company’s Chief AI Officer. They drive strategy from the top down.

This alignment completely changes how companies hire and train. As routine tasks get fully automated, the corporate pyramid flattens. This gives rise to the “AI Generalist” or “AI Orchestrator.”

These aren’t machine learning engineers. Instead, they are deep domain experts like seasoned accountants or supply chain managers. They understand how to build AI-powered operational pipelines. Their job is to map legacy workflows, design guardrails, and review autonomous outputs. Then, they continuously feed new bottlenecks to the machine workforce.

Crucially, you must remember that AI Won’t Replace Your Team — But It Will Replace Your Workflow. If you fail to redesign career paths around human-agent teams, you will inevitably hit a wall.

Governance, Risk, and Long-Term Sustainability

Scaling AI introduces new enterprise risks. A hallucinating chatbot is a PR disaster. However, a hallucinating financial agent making real-time ledger decisions is an existential threat. Some technical leaders take the view of “It’s Just Math, Stupid: Why AI ‘Hallucinations’ Are a Feature, Not a Bug.” But for the enterprise risk officer, hallucinations are purely a liability.

Therefore, governance is no longer a compliance afterthought. It is the structural bridge connecting model management to business risk. Legislation like the EU AI Act is raising the bar, and traditional IT risk tools are now inadequate for autonomous systems.

The primary challenge is the “black box” problem, examined in “The ‘Black Box’ Problem: Why We Can’t Audit AI.” In heavily regulated sectors, you must be able to explain the exact data sources behind an AI decision. Failing to do so can put you out of compliance with regimes like SOX or HIPAA.

To survive in 2026, your AI infrastructure must include automated governance platforms. You must log every agentic decision and verify data provenance. Furthermore, you must guarantee “meaningful human control” through clear override mechanisms.
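As a hedged sketch of what “log every decision, record provenance, keep a human override” might mean in code, the example below blocks any action above a threshold unless a named human has approved it, and logs both outcomes with their data sources. The class, the threshold, and identifiers such as `invoice-123` are hypothetical, not part of any specific governance platform.

```python
import json
from datetime import datetime, timezone

class DecisionLog:
    """Append-only record of agent actions and the data behind them."""

    def __init__(self):
        self.records = []

    def record(self, agent, action, sources, approved_by=None):
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "sources": list(sources),    # data provenance for this decision
            "approved_by": approved_by,  # None means no human sign-off
        }
        self.records.append(entry)
        return entry

    def export(self):
        """Serialize the log, for example to hand to an auditor."""
        return json.dumps(self.records, indent=2)

def execute(log, agent, action, sources, amount, approval_threshold, approver=None):
    """Allow an action only if it is below the threshold or a human approved it."""
    if amount >= approval_threshold and approver is None:
        log.record(agent, f"BLOCKED: {action}", sources)
        return False
    log.record(agent, action, sources, approved_by=approver)
    return True

log = DecisionLog()
# A large posting without sign-off is blocked but still logged.
first = execute(log, "ledger-agent", "post journal entry", ["invoice-123"], 50_000, 10_000)
# The same posting goes through once a human approver is attached.
second = execute(log, "ledger-agent", "post journal entry", ["invoice-123"], 50_000, 10_000,
                 approver="controller")
```

Logging the blocked attempt as well as the approved one matters: an auditor typically wants to see what the agent tried to do, not only what it was allowed to do.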

A Roadmap to Turn AI Into a Profit Engine

Transforming from isolated pilots to an intelligent operating layer requires militant discipline. You need a reliable framework, such as the one in “From Prompt to Production: The Complete 2026 Guide to Building AI-Powered Applications,” to navigate this complexity.

How long does AI take to generate real returns?

A massive enterprise transformation typically spans 18 to 24 months. However, targeted AI initiatives should demonstrate measurable financial payback within 6 to 12 months.

Here is the realistic progression for a mid-market implementation:

- Phase 1: Discovery and Alignment (Weeks 1–8): Stop chasing shiny objects. Leadership must identify three to five core business friction points. Secure a hard budget, draft the governance charter, and run a data audit.

- Phase 2: Use Case Selection & Architecture (Weeks 6–12): Filter projects through your new ROI framework. Make the critical “Build vs. Partner” decision. Standard workflows should rely on specialized vendors. Highly proprietary workflows might require an internal build.

- Phase 3: Data & Infrastructure Foundations (Months 3–6): This is where real work happens. Connect your vector databases and sanitize data pipelines. Run tightly scoped pilots using AgentOps to debug tool calls.

- Phase 4: Production Deployment and Scale (Months 6–12): Pilots that clear ROI hurdles move into production. Continuous monitoring for model drift is established here. Daily control is handed over to your AI Orchestrators.

- Phase 5: Continuous Innovation (Month 12+): AI operates as a living system. Solutions scale laterally across the enterprise. You consistently track compounding productivity gains against your baseline.
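The model-drift monitoring mentioned in Phase 4 can start very simply: compare recent accuracy on reviewed outputs against the accuracy measured during the pilot. The sketch below assumes outcomes are recorded as 1 (correct) or 0 (incorrect) and uses an arbitrary 5-point tolerance; both the function name and the threshold are illustrative choices.

```python
from statistics import mean

def drift_detected(baseline_accuracy, recent_outcomes, tolerance=0.05):
    """Flag drift when recent accuracy falls more than `tolerance` below baseline.

    recent_outcomes: non-empty list of 1 (correct) / 0 (incorrect) review results.
    Returns (flag, recent_accuracy).
    """
    recent_accuracy = mean(recent_outcomes)
    return (baseline_accuracy - recent_accuracy) > tolerance, recent_accuracy

# Pilot measured 92% accuracy; the latest ten reviewed tickets score 7 of 10.
flag, accuracy = drift_detected(0.92, [1, 1, 1, 0, 1, 0, 1, 1, 0, 1])
```

A check like this only works if someone keeps labeling a sample of production outputs, which is one of the recurring tasks the article assigns to AI Orchestrators.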

Final Thought: AI Is Not a Tool — It’s an Operating Layer

The fundamental paradigm shift today is realizing AI is no longer just a software application. Instead, it is the operating infrastructure upon which your entire enterprise runs.

When treated as an isolated tool, AI yields marginal gains and huge IT headaches. But when engineered as an intelligent operating layer, it completely eliminates the delay between insight and action.

Let’s be incredibly clear. The goal of this technology is not to replace your workforce. Rather, AI replaces inefficiency, blind spots, and manual repetition. It amplifies human judgment. The companies that dominate the next decade will be the ones that stop treating AI as an experiment. They will start executing their actual business through it.