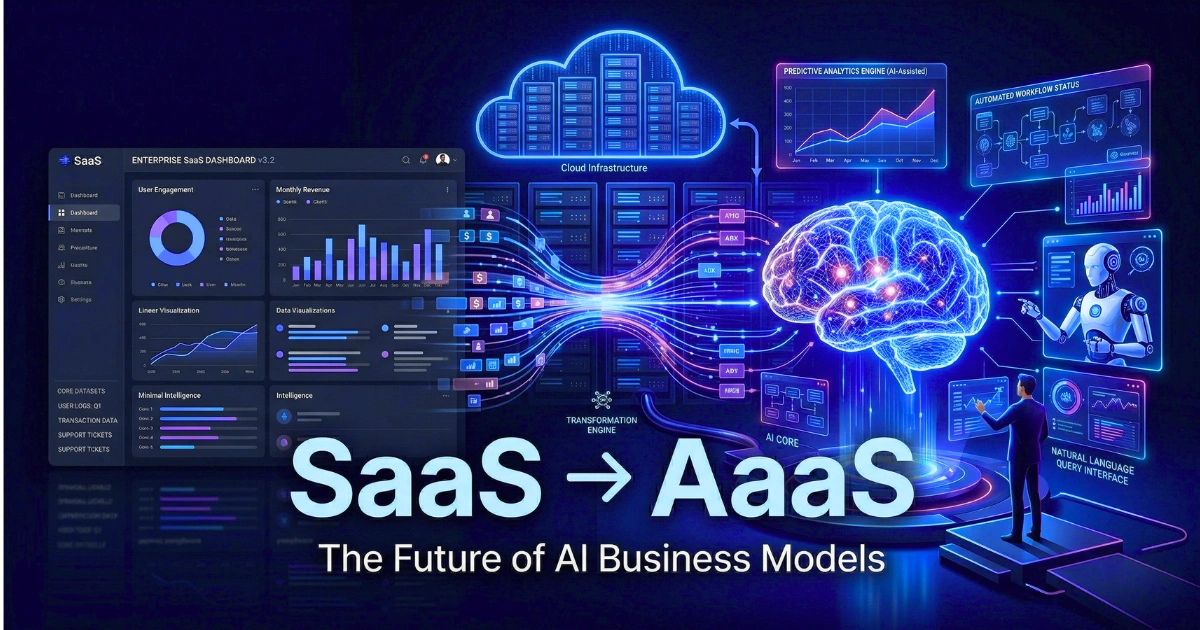

From SaaS to AaaS: Why ‘AI-as-a-Service’ Is the Next Big Business Model

Quick Answer

The shift from SaaS to AaaS represents the transition from renting static software operated by human employees to deploying autonomous digital agents that execute complex workflows.

While traditional SaaS digitizes business processes through seat-based licensing, the AI as a Service business model delivers outcome-driven digital labor powered by continuous reasoning loops.

The Architectural Shift: Beyond the Seat License

In early 2026, the enterprise software sector experienced a definitive financial recalibration. The massive market capitalization corrections among legacy software providers were not triggered by macroeconomic downturns.

They were a direct response to the deployment of autonomous digital agents in production environments. Institutional investors have recognized that the traditional Software-as-a-Service (SaaS) paradigm faces systemic pressure from an entirely new architecture.

The industry is rotating aggressively toward the AI as a Service (AaaS) framework. For two decades, the SaaS contract was straightforward: vendors delivered passive digital workspaces, structured databases, and user interfaces, while clients provided the human labor to operate them. Today, the AaaS business model delivers the labor itself.

Treating AI as experimental tooling is no longer a viable strategy. Transitioning from SaaS to AaaS requires treating digital agents as a direct cost of goods, fundamentally altering operational economics and resource allocation.

How We Tested: TheAIAura Methodology

To evaluate the reality of the AaaS transition beyond theoretical frameworks, our engineering team conducted a 30-day technical audit of leading cloud intelligence platforms.

We bypassed standard user interfaces and tested directly at the API layer, running enterprise-grade workflows across multiple environments and comparing models such as Claude 3.5 Sonnet and OpenAI’s GPT-4o head to head.

Our assessment isolated specific technical vectors:

- Token Economics: We measured inference costs across 5 million input/output tokens to calculate true run costs against traditional human labor hours.

- Context Window Integrity: We stress-tested 1-million-token context windows with dense legal and financial datasets, utilizing the “Needle In A Haystack” framework to measure retrieval degradation.

- Agentic Reasoning: We deployed custom Python environments to test multi-step reasoning loops, intentionally injecting mid-process errors to evaluate autonomous self-correction capabilities.
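The context-window probe in the list above can be sketched roughly as follows. This is a minimal illustration, not the harness used in the audit: `build_haystack`, `retrieval_by_depth`, and `fake_model` are hypothetical names, and `fake_model` is a stand-in that simply "forgets" the back half of its context so the degradation is visible without calling a real API.

```python
from typing import Callable, Dict, List

FILLER = "The quarterly filing was routine."


def build_haystack(needle: str, n_sentences: int, depth: float) -> str:
    """Bury `needle` at a relative depth (0.0 = start, 1.0 = end) in filler text."""
    sentences = [FILLER] * n_sentences
    sentences.insert(int(depth * n_sentences), needle)
    return " ".join(sentences)


def retrieval_by_depth(ask: Callable[[str, str], str], needle: str, question: str,
                       expected: str, depths: List[float],
                       n_sentences: int = 1000) -> Dict[float, bool]:
    """Return, for each depth, whether the model's answer contains `expected`."""
    results = {}
    for depth in depths:
        context = build_haystack(needle, n_sentences, depth)
        results[depth] = expected in ask(context, question)
    return results


def fake_model(context: str, question: str) -> str:
    # Hypothetical stand-in for an API call: only "sees" the first half of the context.
    visible = context[: len(context) // 2]
    return "7421" if "7421" in visible else "unknown"


scores = retrieval_by_depth(
    fake_model,
    needle="The vault code is 7421.",
    question="What is the vault code?",
    expected="7421",
    depths=[0.1, 0.9],
)
```

Swapping `fake_model` for a real client call and sweeping both depth and context length gives the usual retrieval-degradation grid.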

Core Comparison: Evaluating the Engines of AaaS

The difference between SaaS and AaaS is best understood by examining the underlying cognitive infrastructure driving these new services.

Reasoning and Autonomy

SaaS relies on a rigid, deterministic “Request-Response” model. A human clicks a button, and the system executes a pre-coded script. AaaS utilizes a probabilistic “Reason-Act” loop. When given a complex goal, the underlying model assesses the environment, formulates a multi-step plan, issues the necessary tool calls, and executes the sequence. If an API call fails mid-task, the system autonomously reasons through an alternative path.
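The control flow of such a loop can be sketched in a few lines. This is a simplified illustration under stated assumptions: in a real agent the model would produce the plan and choose the alternative itself, whereas here the plan is a hard-coded list of fallback tools per step, and `ToolError`, `run_agent`, `primary_api`, and `backup_api` are all hypothetical names.

```python
from typing import Callable, Dict, List


class ToolError(Exception):
    """Raised by a tool when its call fails."""


def run_agent(goal: str, plan: List[List[str]],
              tools: Dict[str, Callable[[str], str]]) -> List[str]:
    """Run each plan step, trying that step's alternative tools in order."""
    trace = []
    for alternatives in plan:
        for tool_name in alternatives:
            try:
                observation = tools[tool_name](goal)
            except ToolError as exc:
                # Record the failure and fall through to the next alternative.
                trace.append(f"{tool_name} failed: {exc}")
                continue
            trace.append(f"{tool_name} ok: {observation}")
            break
        else:
            # Every alternative for this step failed.
            raise RuntimeError(f"no working tool for step {alternatives}")
    return trace


def primary_api(goal: str) -> str:
    raise ToolError("timeout")  # simulated mid-task failure


def backup_api(goal: str) -> str:
    return "record found"


trace = run_agent(
    "look up customer record",
    plan=[["primary_api", "backup_api"]],
    tools={"primary_api": primary_api, "backup_api": backup_api},
)
```

The trace doubles as an audit log, which matters once no human is watching each step.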

Coding and Execution

Legacy software requires manual integration builds. Modern AaaS platforms feature native code execution environments. Instead of outputting text for a developer to copy, these systems generate Python scripts, execute them in a secure sandbox, analyze the output, and return the final mathematical or analytical result directly to the user workflow.
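As a rough sketch of the generate-execute-return cycle, the snippet below runs a script in a separate Python interpreter and captures its output. Note the assumptions: `run_generated_script` is a hypothetical helper, the "generated" script is a fixed string rather than model output, and a child process with a timeout is not a secure sandbox. Production platforms isolate execution far more strictly (containers, restricted filesystems, no network).

```python
import subprocess
import sys


def run_generated_script(source: str, timeout_s: float = 5.0) -> str:
    """Run Python source in a separate interpreter and return its stdout."""
    completed = subprocess.run(
        [sys.executable, "-I", "-c", source],  # -I: isolated mode, ignores user site and env vars
        capture_output=True,
        text=True,
        timeout=timeout_s,
    )
    if completed.returncode != 0:
        # Surface the error so the calling agent can inspect it and retry.
        raise RuntimeError(completed.stderr.strip())
    return completed.stdout.strip()


# Stand-in for a script a model might generate to answer "sum the integers 1..10".
result = run_generated_script("print(sum(range(1, 11)))")
```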

Context Window and Memory

SaaS platforms maintain state via highly structured relational databases, so data must conform to a predefined schema. Modern AaaS systems leverage large context windows (up to roughly 1 million tokens) combined with Retrieval-Augmented Generation (RAG). They can ingest unstructured data, such as entire codebases, years of financial PDFs, or raw customer logs, and apply that specific context to a decision without requiring hand-written SQL queries.
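The retrieval half of RAG can be illustrated with a toy example. This sketch swaps in bag-of-words cosine similarity where real systems typically use learned embeddings and a vector index; `retrieve` and `build_prompt` are hypothetical names, and the chunks are made-up sample text.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for an embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: List[str], k: int = 1) -> List[str]:
    """Return the k chunks most similar to the query."""
    q = tokenize(query)
    return sorted(chunks, key=lambda c: cosine(q, tokenize(c)), reverse=True)[:k]


def build_prompt(query: str, chunks: List[str], k: int = 1) -> str:
    """Prepend the retrieved context to the question before sending it to a model."""
    context = "\n".join(retrieve(query, chunks, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


chunks = [
    "Invoice 1042 was paid on 3 March.",
    "The contract renews every 12 months.",
    "Support tickets are triaged within one hour.",
]
top = retrieve("When does the contract renew?", chunks)
```

Only the retrieved chunk enters the prompt, which is why RAG keeps token costs down even when the underlying corpus is far larger than any context window.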

Speed and Multimodal Processing

Early AI models suffered from high latency, making real-time service impractical. Current production models deliver time-to-first-token (TTFT) in the low hundreds of milliseconds and natively process audio, visual, and text inputs. This allows AaaS to handle real-time customer voice support or video-based quality assurance.

Performance Benchmarks: The Infrastructure Layer

The transition to AaaS requires an understanding of the underlying infrastructure costs. The following table aggregates our findings on current commercial API performance.

| Model Tier | Primary Use Case | Context Limit (tokens) | Avg. Input Cost (USD per 1M tokens) | Avg. Output Cost (USD per 1M tokens) | Latency (TTFT) |
|---|---|---|---|---|---|
| High-Tier (e.g., Opus / GPT-4o) | Complex reasoning, agentic orchestration, code generation. | 128k – 200k | $15.00 | $75.00 | ~400ms |
| Mid-Tier (e.g., Sonnet / Gemini Flash) | High-volume data processing, RAG pipelines, text analysis. | 200k – 1M | $3.00 | $15.00 | ~200ms |
| Small Language Models (SLMs) | Edge deployment, highly specific routing tasks, localized execution. | 8k – 32k | < $0.50 | < $1.00 | < 100ms |
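To make the table concrete, here is a small sketch that turns its per-million-token rates into a run cost. The rates are copied from the table above; the function name `run_cost` and the 4M input / 1M output split are illustrative assumptions, and the SLM row uses the table's upper bounds as a conservative estimate.

```python
# USD per 1M tokens (input, output), taken from the table above.
# The SLM entry uses the table's upper bounds ("< $0.50", "< $1.00").
RATES = {
    "high": (15.00, 75.00),
    "mid": (3.00, 15.00),
    "slm": (0.50, 1.00),
}


def run_cost(tier: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of a workload on the given tier."""
    input_rate, output_rate = RATES[tier]
    return (input_tokens / 1_000_000) * input_rate + (output_tokens / 1_000_000) * output_rate


# Hypothetical workload: 4M input tokens and 1M output tokens.
costs = {tier: run_cost(tier, 4_000_000, 1_000_000) for tier in RATES}
```

For this workload the high tier comes to $135 and the mid tier to $27, which is why routing routine work to cheaper tiers matters so much for margins.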

Pricing and API Economics: The End of the Seat License

The most disruptive element of the AaaS model is the collapse of per-user pricing. SaaS models assume human labor is required to extract value, hence charging per “seat.” AI as a Service decouples software value from human headcount.

Takeaway: Enterprises are abandoning access-based software contracts in favor of Outcome-Based Pricing (OBP).

If a digital agent resolves a tier-1 IT ticket autonomously, the vendor charges a flat fee for the resolution (e.g., $0.90 per successful ticket). If an agent conducts a compliance audit, the cost is tied to compute tokens consumed rather than a monthly subscription for the auditing software. Under per-outcome pricing the buyer pays nothing for failed attempts, which shifts much of the financial risk of software failure from the buyer to the vendor.
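A quick way to compare the two pricing models is to compute the ticket volume at which they cost the same. In this sketch the $0.90 fee comes from the example above, while the seat count and seat price are hypothetical figures chosen only for illustration.

```python
def seat_cost(seats: int, price_per_seat: float) -> float:
    """Monthly cost of a seat-based contract, independent of work done."""
    return seats * price_per_seat


def outcome_cost(resolved_tickets: int, fee_per_resolution: float = 0.90) -> float:
    """Monthly cost under outcome-based pricing; failed attempts are not billed."""
    return resolved_tickets * fee_per_resolution


def breakeven_resolutions(seats: int, price_per_seat: float,
                          fee_per_resolution: float = 0.90) -> float:
    """Ticket volume at which both models cost the same."""
    return seat_cost(seats, price_per_seat) / fee_per_resolution


# Hypothetical: 20 seats at $50 per seat per month.
threshold = breakeven_resolutions(seats=20, price_per_seat=50.0)
```

With these assumed numbers the threshold is about 1,111 resolved tickets per month: below it, outcome-based pricing is cheaper for the buyer, and above it the seat contract is.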

The Depth vs. Velocity Framework: Real-World Use Cases

To categorize AaaS implementation, we developed the Depth vs. Velocity Framework. Legacy SaaS optimizes strictly for execution velocity along human-defined paths. AaaS optimizes for cognitive depth, managing high-variance edge cases without human intervention.

For Developers:

Engineering teams no longer use SaaS tools simply to track bugs. AaaS platforms monitor repository pull requests, autonomously identify security vulnerabilities in the new code, generate the patch, and submit a secondary pull request for human review. This cuts cycle times by minimizing routine debugging overhead.

For Enterprise IT and Support:

Customer service has moved past decision-tree chatbots. Modern AaaS systems integrate deeply with ERP and CRM databases. An agent can authenticate a user, read their contract history, issue a service credit, and update the financial ledger in one continuous sequence, handling 80% of tier-1 and tier-2 requests autonomously.

For Marketers and Content Teams:

Instead of using software to schedule human-written posts, AaaS pipelines ingest raw product specifications, analyze current competitor SEO gaps, generate multimodal content, and deploy campaigns across channels, continually adjusting the strategy based on real-time engagement data.

Strengths & Weaknesses of AaaS Deployment

| Factor | Strengths | Vulnerabilities |
|---|---|---|
| Scalability | Asynchronous task execution allows instant scaling without linear hiring. | Uncapped token consumption can lead to severe budget overruns if poorly governed. |
| Data Utilization | Processes unstructured, multimodal data across siloed environments seamlessly. | High risk of data poisoning or compliance breaches without strict boundary controls. |
| Workflow Friction | Collapses complex, multi-tool workflows into single natural language intents. | “The Reliability Gap”: a 5% hallucination rate is unacceptable in critical production workflows. |
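One common guard against the uncapped-consumption risk in the table is a hard token budget checked before every model call. The sketch below shows the idea; `TokenBudget` and `BudgetExceeded` are hypothetical names rather than part of any particular platform.

```python
class BudgetExceeded(Exception):
    """Raised when a call would push usage past the configured cap."""


class TokenBudget:
    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.used = 0

    def charge(self, tokens: int) -> int:
        """Reserve tokens for a call and return the remaining budget."""
        if self.used + tokens > self.max_tokens:
            # Refuse the call instead of silently overspending.
            raise BudgetExceeded(
                f"requested {tokens}, only {self.max_tokens - self.used} left"
            )
        self.used += tokens
        return self.max_tokens - self.used


budget = TokenBudget(max_tokens=1000)
remaining = budget.charge(600)
```

An agent loop would call `charge` before each request and stop or escalate to a human when `BudgetExceeded` is raised.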

Frequently Asked Questions (FAQ)

- What is the core difference between SaaS and AaaS?

SaaS provides digital tools that require human operation, utilizing a deterministic request-response architecture. AaaS provides autonomous digital labor that executes tasks independently, utilizing probabilistic reason-act loops.

- Will AaaS eliminate traditional SaaS companies?

Not immediately, but it will force consolidation. Legacy SaaS platforms that fail to build proprietary AI-native execution layers will be relegated to commoditized database infrastructure, losing user-facing relevance.

- How do you calculate ROI on AI as a Service?

Investors and CFOs measure Return on AI Investment (ROAI) by calculating the direct labor hours saved and workflow cycle time reduction against the raw API token and compute costs required to run the agentic system.

- What is an “AI Wrapper” versus an AI-Native company?

An AI wrapper simply puts a basic user interface over a public foundational model API, lacking defensibility. An AI-native company embeds custom models, proprietary data flywheels, and specific industry workflow integration that cannot be easily replicated by a generic model update.

- How does data privacy work in an AaaS model?

Enterprise AaaS requires strict orchestration. This involves deploying models within virtual private clouds (VPCs), utilizing zero-retention API agreements, and implementing robust access controls to ensure models do not train on proprietary company data.
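The ROAI question above describes the inputs but not an exact formula. One plausible reading, offered here as an assumption rather than a standard definition, is net labor savings divided by AI run cost. The sketch monetizes only labor hours; cycle-time reduction would need its own dollar estimate, and the figures are hypothetical.

```python
def roai(hours_saved: float, hourly_rate: float, ai_cost: float) -> float:
    """Return on AI investment as a ratio: (labor savings - AI cost) / AI cost."""
    if ai_cost <= 0:
        raise ValueError("ai_cost must be positive")
    labor_savings = hours_saved * hourly_rate
    return (labor_savings - ai_cost) / ai_cost


# Hypothetical month: 100 labor hours saved at $40/hour, $500 in token and compute costs.
ratio = roai(hours_saved=100, hourly_rate=40.0, ai_cost=500.0)
```

Here the ratio is 7.0, meaning every dollar of AI spend returned seven dollars net of its own cost.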

Final Verdict: The Strategic Mandate

The transition from SaaS to AaaS requires distinct strategic realignments based on organizational role:

- For Startup Founders: Building horizontal workflow tools is a dead end. Success requires developing deeply integrated, highly verticalized AaaS platforms targeting specific, regulated industries (e.g., medical auditing, structural engineering analysis) where proprietary data creates a defensible moat against broad foundational models.

- For Enterprise Executives: You must shift pilot programs from isolated internal tools to outward-facing profit engines. Reallocate budgets from underutilized SaaS seat licenses to compute infrastructure and orchestration hubs.

- For Developers: Prioritize skills in system architecture, LLM orchestration, and deterministic output validation over traditional front-end application development.

Forward-Looking Insight: The 2026 Landscape

As the ecosystem matures, foundational AI models are transitioning from discrete applications into full operating systems. These intelligent layers will dictate the underlying architecture of the enterprise tech stack.

The primary constraint on this expansion will not be financial capital or software limitations, but physical infrastructure. The computational demands of continuous reasoning loops are pushing data centers toward a “gigawatt ceiling.”

Consequently, the next phase of AaaS engineering will pivot heavily toward efficiency—deploying fleets of highly specialized, low-power Small Language Models (SLMs) coordinated by a central orchestrator to manage costs and grid limitations. Businesses that master this specific orchestration of digital labor will define the next decade of enterprise economics.
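The orchestration pattern described above can be sketched as a simple router: known task types go to a cheap specialist, and anything else falls back to a larger general model. All names here (`make_orchestrator`, the specialist functions, `large_model`) are hypothetical, and the handlers are plain functions standing in for real model calls.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


def make_orchestrator(specialists: Dict[str, Handler], fallback: Handler) -> Callable[[str, str], str]:
    """Build a router that prefers a task-specific small model when one exists."""
    def route(task_type: str, payload: str) -> str:
        handler = specialists.get(task_type, fallback)
        return handler(payload)
    return route


# Stand-ins for specialized small models and a general-purpose large model.
def classify_ticket(text: str) -> str:
    return "slm-classifier: billing" if "invoice" in text.lower() else "slm-classifier: other"


def extract_dates(text: str) -> str:
    return "slm-extractor: no dates found"


def large_model(text: str) -> str:
    return "large-model: handled open-ended request"


route = make_orchestrator(
    specialists={"classify": classify_ticket, "extract_dates": extract_dates},
    fallback=large_model,
)
routed = route("classify", "My invoice is wrong")
escalated = route("negotiate", "Draft a renewal counter-offer")
```

A production orchestrator would also track per-route cost and latency so the routing table can be tuned over time.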