Exploring AI, One Insight at a Time

The AI Adoption Illusion: Why Most Companies Are Doing It Wrong

The Era of Subsidized Experimentation Is Dead

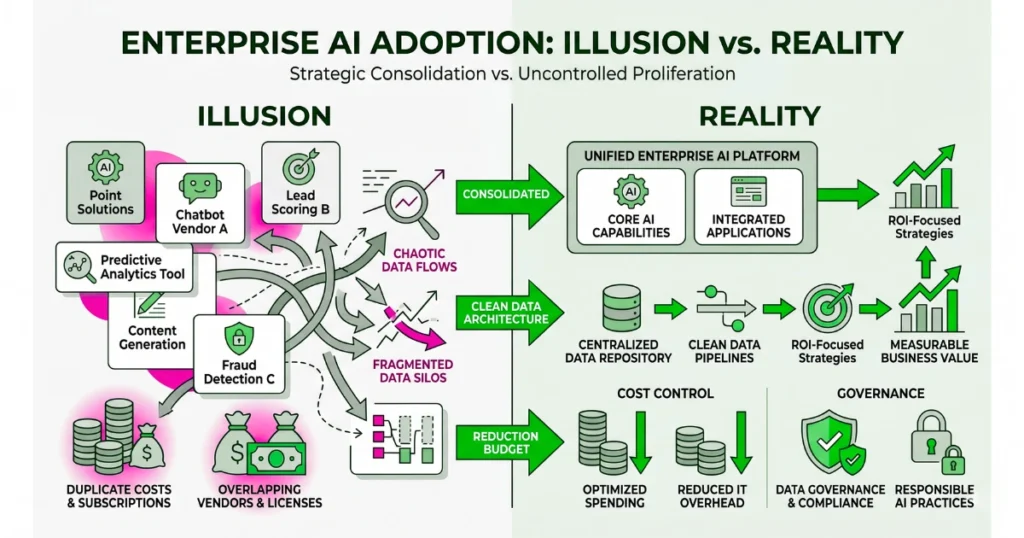

Ninety-five percent of enterprise AI deployments are burning cash with zero measurable return.

CIOs spent the last three years authorizing overlapping micro-subscriptions for generative go-to-market tools and internal copilots just to avoid looking outdated.

This created an artificial economy of low-friction proofs of concept where multiple startups were testing identical LLM wrappers on the exact same corporate datasets.

The resulting vendor sprawl has become unmanageable. This has led directly to the current boardroom mandate: strip the experimentation budget down to the studs and reallocate capital only to systems proving hard financial returns.

The contraction is happening right now.

Shadow IT Now Carries Massive Compute Penalties

The incentive to experiment was high when monthly switching costs appeared low, but infrastructure teams are now uncovering the true operational tax of fragmented tooling.

We saw this at a mid-sized logistics firm last month when an orphaned “AI SDR” agent in the marketing department lost its connection to the central CRM.

The script fell into an uncaught retry loop against an unindexed Pinecone vector database, executing 400,000 bad queries and racking up a $14,200 AWS API bill over a long weekend before operations finally killed the namespace.

Mission-critical software does not fail silently.
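The guardrail that was missing in that incident is small. Here is a minimal sketch of a bounded retry, assuming the agent wraps each outbound query in a helper; `call_with_backoff` and `RetryBudgetExceeded` are hypothetical names for illustration, not anything from the firm's actual script or from a vendor SDK.

```python
import time


class RetryBudgetExceeded(RuntimeError):
    """Raised when retries run out, so the failure is loud instead of silent."""


def call_with_backoff(operation, max_attempts=5, base_delay=0.5,
                      max_delay=30.0, sleep=time.sleep):
    """Run `operation`, retrying failures with capped exponential backoff.

    All parameters are illustrative defaults, not recommendations.
    """
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception as exc:  # a real agent should catch narrower error types
            last_error = exc
            if attempt == max_attempts:
                break  # budget spent: stop querying and escalate
            # Delay doubles each attempt (0.5s, 1s, 2s, ...) up to max_delay.
            sleep(min(max_delay, base_delay * 2 ** (attempt - 1)))
    raise RetryBudgetExceeded(
        f"gave up after {max_attempts} attempts"
    ) from last_error
```

With a cap like this, a dead CRM connection costs a handful of queries and one raised exception that monitoring can alert on, rather than a weekend of unattended traffic.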

Revenue without an infrastructure narrative is just a feature. Narrative without $1 million to $2 million in proven annual recurring revenue is vaporware.

Executive Cover for Operational Bloodletting

A dangerous psychological game is playing out in earnings calls and all-hands meetings this quarter. Boards are aggressively trimming workforces and cutting core operational budgets to correct for broader macroeconomic mistakes made during previous years.

Instead of admitting operational bloat, executives are citing increased artificial intelligence investment as the public justification for the layoffs, using vendor integration roadmaps as convenient cover for the cuts.

Expect budgets to consolidate around a microscopic fraction of vendors.

If your product cannot mathematically prove it replaces a specific human workflow or permanently reduces cloud infrastructure costs, it will be cut by Q3.

The enterprise software market is bifurcating: a handful of foundational winners will take the lion’s share of the budget, and the rest will face flattening revenue and eventual insolvency.

Startups building features disguised as companies will run out of runway by December.