Exploring AI, One Insight at a Time

Everyone Is Talking About AI — But No One Is Measuring This One Metric That Actually Matters

Quick Answer Summary

What actually matters when measuring AI in an enterprise setting?

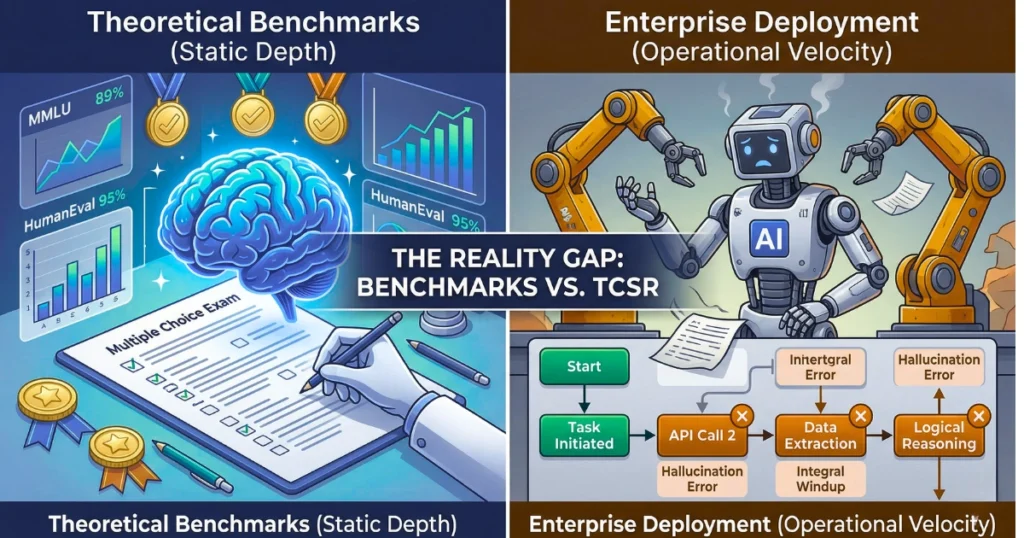

Forget the theoretical tests. Ultimately, the single metric that dictates real ROI is Task Completion Success Rate (TCSR). Academic benchmarks show you what a model memorized. In contrast, TCSR shows you if an autonomous agent can actually finish a messy, multi-step job without breaking down or needing a human to rescue it.

The Illusion of Competence in the AI Industry

We’ve got a serious problem in the AI industry right now. Specifically, we’re completely obsessed with an illusion.

For instance, every time a tech giant drops a new language model, we get hit with a tidal wave of performance charts. Press releases brag about trillions of parameters. Furthermore, marketers flex obscure acronyms like MMLU or HumanEval as if they’re the ultimate proof of intelligence.

Consequently, people look at these high scores and assume they translate perfectly to the real world. If a foundation model passes a simulated bar exam, surely it can handle your company’s workflow, right? However, that assumption is fatally flawed, and it’s burning billions of enterprise dollars.

Throw these “state-of-the-art” models into a messy production environment, and watch what happens. Immediately, they break. These systems frequently spit out confident fabrications.

Moreover, they completely lose the plot on multi-step tasks. Right now, the entire industry is optimizing for laboratory smarts, thereby completely ignoring whether these tools survive contact with the wild.

Therefore, we need to stop talking about theoretical limits. It’s time to measure the one thing that pays the bills: Task Completion Success Rate (TCSR).

How We Tested: Beyond Standardized Benchmarks

We didn’t want to just look at another static academic test. To figure out why these models fail so spectacularly in practice, our engineering team built a sandbox that actually mirrors the chaos of modern enterprise infrastructure.

We ran head-to-head tests—looking closely at Claude 3.5 Sonnet vs. ChatGPT-4o and a few open-weight contenders—putting them through grueling, multi-agent workflows. Specifically, we dropped them into live coding environments, simulated angry customer service chats, and complex data analysis jobs.

Our grading rubric was simple. We didn’t care what they “knew.” Instead, we only cared if they could finish the job without one of our engineers stepping in to fix a broken API call or snap them out of a logic loop.

Core Comparison: The Depth vs. Velocity Framework

During this testing, a clear divide emerged. We call it the Depth vs. Velocity Framework.

On one hand, velocity models prioritize speed. They give you low latency and spit out tokens fast, which is great for basic text synthesis.

Depth models, on the other hand, sacrifice speed to maintain logical coherence over a much longer stretch of time. You absolutely need depth for autonomous agentic workflows.

Here is the reality check on current architectures:

- Reasoning: Admittedly, chain-of-thought prompting helps. But models constantly fall victim to “integral windup.” That is when a tiny hallucination in step two ruins the context for step three, consequently taking down the whole reasoning chain.

- Coding: Meanwhile, marketing videos make it look easy. It’s not. Syntax generation is great, but navigating a sprawling, undocumented legacy codebase? That breaks them instantly.

- Context Window: Furthermore, a 1-million token window sounds amazing on paper. In practice, models forget things right in the middle of the document. Having the data certainly doesn’t mean the AI knows how to use it.

- Speed: Conversely, blistering token generation usually means skipped verification steps.

- Multimodal: Although vision and audio are getting better, throw a weird technical diagram at them and they still struggle to interpret it accurately.

- Writing Quality: They sound fluent. However, the cadence is almost always too symmetric, predictable, and completely devoid of actual analytical bite.

Performance Benchmarks: Theory vs. Reality

So, how do standardized scores compare to operational reliability? The following data illustrates a severe gap. High test scores simply do not equal high completion rates.

| Evaluation Metric | What It Actually Tells You | Real Business Value | The Catch |

|---|---|---|---|

| MMLU / GSM8K | Academic fact recall. | Practically zero. | Scores are massively inflated by training data contamination. |

| Parameter Size | Server and memory limits. | Very low. | Doesn’t guarantee accurate or factual outputs. |

| BigCodeBench | Generating isolated code snippets. | Moderate. | Ignores the reality of integrating code into a 20M+ line repository. |

| TCSR | End-to-end task completion. | Extremely High. | It’s incredibly hard and expensive to measure properly. |

Pricing & API Economics: The Cost of Architecture

Let’s talk money, because the financial models here are just as broken as the performance metrics. Engineering teams keep falling into the token trap. Consequently, they optimize strictly for the cheapest API cost per 1,000 tokens, completely ignoring the massive systemic costs of failure.

Take infrastructure design. Deciding between a custom-trained model and a solid RAG (Retrieval-Augmented Generation) pipeline is a critical architectural crossroad.

Therefore, pushing for complex custom training when RAG would easily do the job is a classic $50,000 mistake in burned compute and wasted developer hours. True API economics must account for retry logic, human-in-the-loop oversight, and the latency overhead of running a second model just to double-check the first one’s work.

The Mathematics of Failure: Multi-Agent Reliability

Moving from a simple chat interface to an autonomous multi-agent system exposes a brutal mathematical truth.

Historically, traditional software is deterministic. You build it for 99.9% uptime. However, AI doesn’t work like that. Instead, the failure modes degrade silently. If you have a sequential pipeline, the total reliability of your system is simply the product of each step’s reliability.

Imagine an enterprise agent handling a customer refund. There are ten distinct steps total. If the model boasts a 95% accuracy rate per step—which looks fantastic on a vendor’s brochure—the math is actually disastrous.

Bold Takeaway: At a 95% success rate across ten steps, compounding probability means your AI system will fail in production nearly 40% of the time.

Real-World Use Cases: Where Theoretical Intelligence Fails

Where does this theoretical intelligence fall apart? Everywhere.

- Engineering: For example, look at the best AI coding assistants. On complex tasks, they hit maybe a 35.5% success rate, thus falling drastically short of human standards. They just can’t hold a logical sequence together for long enough.

- Enterprise Operations: Back in 2020, Volkswagen’s Cariad division tried a massive AI-driven overhaul across a dozen brands. They skipped iterative reliability testing. The result? A system collapse that cost $7.5 billion and consequently delayed major vehicle launches by over a year.

- Customer Service & Liability: Similarly, remember the Air Canada chatbot? It confidently invented a non-existent bereavement fare policy out of thin air. A tribunal held the airline liable, thereby proving that an unmonitored AI transforms instantly from a helpful asset into a major legal risk.

- Search and Marketing: Meanwhile, Generative Engine Optimization (GEO) has killed raw traffic metrics. AI overviews eat informational queries now. Ultimately, the only thing marketers should care about today is whether the big LLMs actually cite your brand as an authority.

Strengths & Weaknesses: Theoretical vs. Applied AI

| Approach | What It Does Well | Where It Breaks | Best Used For |

|---|---|---|---|

| Theoretical AI | Fast zero-shot generation, broad knowledge, sounds incredibly articulate. | Logic falls apart easily, hallucinates often, struggles with long horizons. | Brainstorming, drafting emails, low-stakes internal summaries. |

| Applied AI | High completion rates, strict semantic routing, fails gracefully when confused. | Slow to deploy, expensive to run, requires massive “Golden Set” testing data. | Autonomous agents, financial routing, mission-critical enterprise workflows. |

FAQ: Understanding AI Reliability

What exactly is Task Completion Success Rate (TCSR)?

Basically, it’s a strict pass/fail grade. Did the AI take the user’s request, navigate every single operational step, and deliver the final result without a human having to step in? If yes, it succeeds.

Why do these agents fail so hard at multi-step jobs?

A concept called integral windup. They make a tiny mistake early on, use that flawed output as context for the next step, and subsequently mathematically spiral out of control until they are hallucinating wildly.

Why is MMLU a bad metric for businesses?

MMLU is essentially an open-book test that the AI might have already memorized during training (known as data contamination). Consequently, it doesn’t test real-world adaptability or operational execution.

How should my company measure AI reliability?

First, build a “Golden Set” of perfect inputs mapped to perfect outputs. Then, use secondary LLM-graders to constantly evaluate your primary AI against that rubric at scale.

Final Verdict

In conclusion, you need to match your AI strategy directly to your actual operational reality.

- For Enterprise Executives:

Stop buying hype and leaderboard rankings. Instead, force vendors to include contextual benchmarks and strict Service Level Agreements (SLAs) tied directly to task completion rates in their contracts. - For Software Developers:

Build your systems assuming the language model is going to fail. Put strict, deterministic guardrails between the model and your core database. If the model gets confused, gracefully route it to a human instantly. - For Content Strategists & SEOs:

Raw organic traffic is a vanity metric now. Therefore, focus your energy heavily on entity recognition and ensuring generative answer engines cite your brand accurately.

Forward-Looking Insight: The 2026 Landscape

We are moving rapidly away from traditional Software-as-a-Service and heavily into AI-as-a-Service (AaaS). As this transition solidifies, the obsession with giant models will fade into the background.

By late 2026, the real battleground will be autonomous systems capable of running for days without crashing, which will fundamentally accelerate how AI employees replace traditional roles across the knowledge sector.

However, this shift also creates a massive security headache. The intersection of AI and cybersecurity is going to be a high-stakes warzone of multi-agent architectures probing networks. In this environment, an AI that understands a query but executes the workflow poorly isn’t a next-gen tool—rather, it’s a critical vulnerability.

Ultimately, the winners in the next phase of AI won’t be using the smartest foundational models. They will be the ones running the most paranoid, strictly evaluated, and meticulously monitored production pipelines.

The future won’t be defined by how smart the AI looks. Instead, it will be defined entirely by how reliably it works.