Exploring AI, One Insight at a Time

AI Agents vs Prompt Engineering: What Actually Works in 2026?

Prompt engineering is dead. It was a zero-interest-rate hallucination.

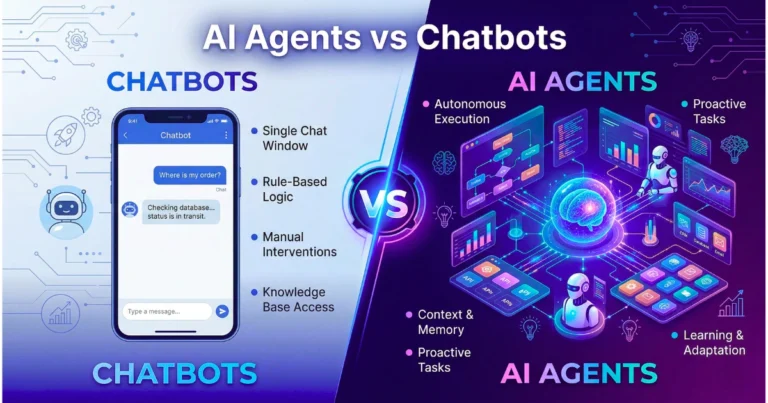

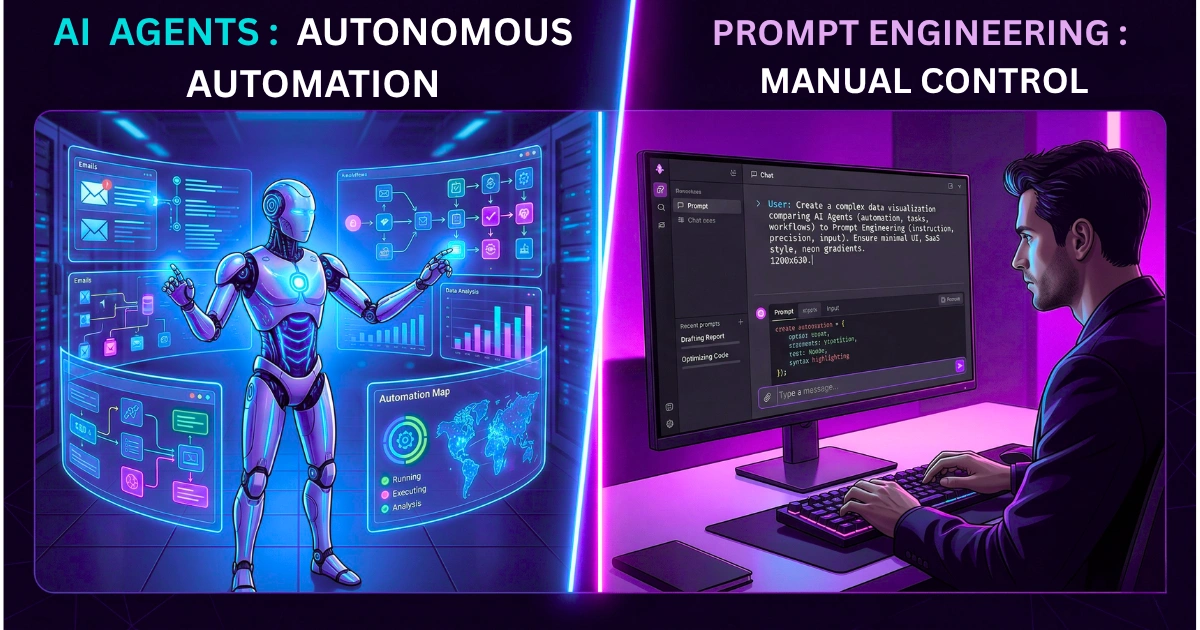

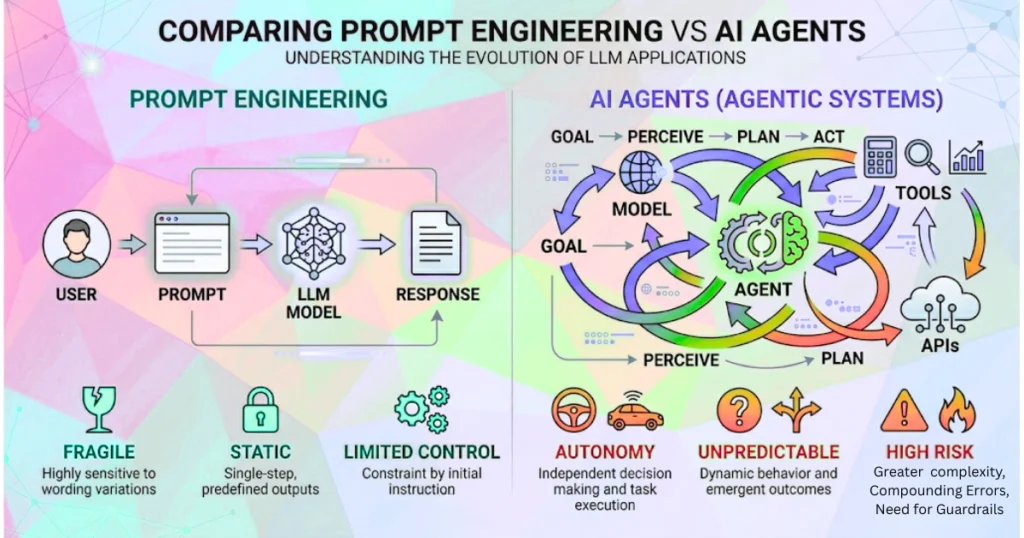

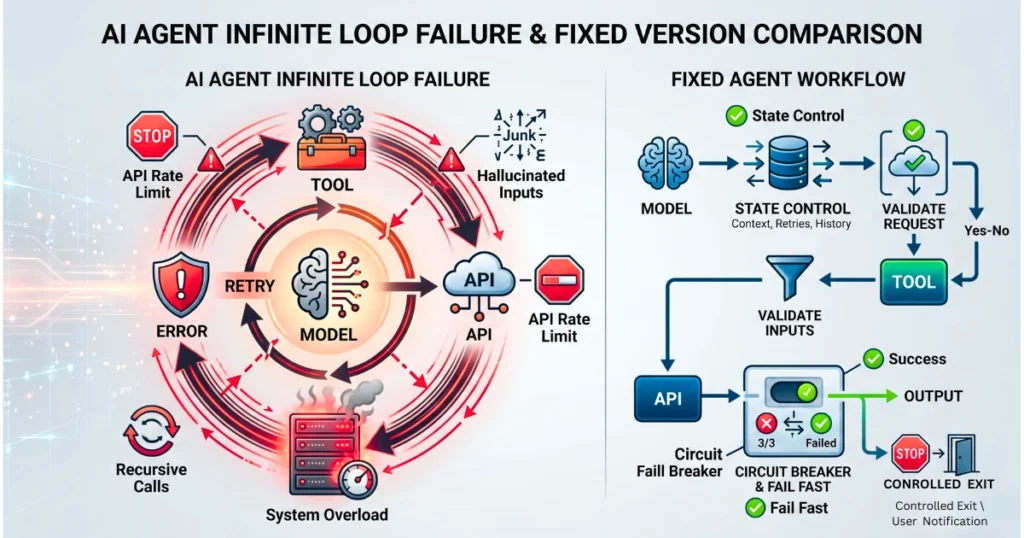

The industry spent three years pretending that a well-crafted English sentence was a substitute for systems architecture. Now it is pivoting hard toward autonomous agents. We are replacing the fragility of natural language with the chaos of uncontrolled infinite loops.

You hand a reasoning engine a set of tools and give it a vague goal. Then, you simply pray it doesn’t destroy your infrastructure.

The reality of enterprise AI deployments is that agents do not magically understand your domain logic. They guess, they hallucinate API inputs, and they brute-force their way through your rate limits.
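One way to stop hallucinated API inputs from ever reaching a real endpoint is to validate every tool call against a fixed schema before executing it. The sketch below is illustrative only: the `search_orders` tool, its fields, and the allowed regions are hypothetical names invented for this example, not part of any real system.

```python
from dataclasses import dataclass

# Hypothetical whitelist; a real deployment would load this from config.
ALLOWED_REGIONS = {"us-east", "eu-west"}


@dataclass(frozen=True)
class SearchOrdersArgs:
    """Hardcoded schema for a hypothetical `search_orders` tool."""
    customer_id: int
    region: str
    limit: int = 20


def _is_int(value) -> bool:
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(value, int) and not isinstance(value, bool)


def parse_search_orders_args(raw: dict) -> SearchOrdersArgs:
    """Reject anything the model invented; return typed arguments otherwise."""
    unknown = set(raw) - {"customer_id", "region", "limit"}
    if unknown:
        raise ValueError(f"unknown arguments: {sorted(unknown)}")
    missing = {"customer_id", "region"} - set(raw)
    if missing:
        raise ValueError(f"missing arguments: {sorted(missing)}")

    customer_id = raw["customer_id"]
    if not _is_int(customer_id) or customer_id <= 0:
        raise ValueError("customer_id must be a positive integer")

    region = raw["region"]
    if not isinstance(region, str) or region not in ALLOWED_REGIONS:
        raise ValueError("region is not in the allowed set")

    limit = raw.get("limit", 20)
    if not _is_int(limit) or not 1 <= limit <= 100:
        raise ValueError("limit must be an integer between 1 and 100")

    return SearchOrdersArgs(customer_id=customer_id, region=region, limit=limit)
```

The agent's proposed arguments go through `parse_search_orders_args` first, and a `ValueError` ends the step instead of triggering a retry with a new guess.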

Escaping the token trap requires killing autonomy

We are seeing a massive return to determinism. The alternative, full autonomy in production, is completely uninsurable.

For instance, we recently audited a production RAG pipeline. The dynamic agent encountered a missing vector chunk for a basic technical query. Instead of failing gracefully, the ReAct loop triggered a recursive search sequence. It hammered a critical third-party API endpoint 4,200 times in three minutes.

As a result, the vendor’s automated security system flagged the traffic as a DDoS attack. They permanently IP-banned the company’s production server.

The entire core application went offline for 14 hours while the CTO begged the vendor for reinstatement. And the final output from the agent was just a hallucinated summary anyway.

If your architecture is not strictly bound by hardcoded state schemas and aggressive circuit breakers, you are essentially deploying malware against your own servers.
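An "aggressive circuit breaker" can be as small as the sketch below. It assumes a simple policy chosen for illustration: a hard cap on calls inside a sliding time window, plus a trip after a run of consecutive failures, and no automatic recovery. The class name, parameters, and thresholds are all assumptions, not a reference to any particular library.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when the breaker refuses to forward a call."""


class CircuitBreaker:
    def __init__(self, max_calls, window_seconds, max_failures,
                 clock=time.monotonic):
        self.max_calls = max_calls            # call budget per window
        self.window_seconds = window_seconds  # sliding window length
        self.max_failures = max_failures      # consecutive failures allowed
        self.clock = clock                    # injectable for testing
        self.call_times = []
        self.failures = 0
        self.is_open = False

    def call(self, fn, *args, **kwargs):
        if self.is_open:
            raise CircuitOpenError("circuit is open; refusing call")

        now = self.clock()
        # Keep only the timestamps that still fall inside the window.
        self.call_times = [t for t in self.call_times
                           if now - t < self.window_seconds]
        if len(self.call_times) >= self.max_calls:
            self.is_open = True  # budget blown: stop everything
            raise CircuitOpenError("call budget exceeded")
        self.call_times.append(now)

        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.is_open = True
            raise
        self.failures = 0  # a success resets the failure streak
        return result

    def reset(self):
        """Deliberately manual: a human decides when traffic resumes."""
        self.call_times.clear()
        self.failures = 0
        self.is_open = False
```

Wrapped around the third-party client with, say, `max_calls=30` and `window_seconds=60`, a runaway loop trips the breaker on its 31st request rather than its 4,200th. Once tripped, it stays open until someone calls `reset()`.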

Abandon the illusion of a digital brain

What actually works right now is abandoning the conversational illusion. Stop optimizing for conversational elegance.

Ultimately, the entire generative engine optimization game is about feeding structured, pre-computed truth to models so they don’t have to think. If a system requires more than two reasoning steps to return data, the architecture is broken.
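To make the two-step rule concrete, here is a rough sketch of a resolver that answers from pre-computed facts first and enforces a hard budget on any fallback steps. The `Fact` type, the `resolve` function, and the idea of modelling reasoning steps as plain callables are assumptions made for illustration.

```python
from dataclasses import dataclass

MAX_REASONING_STEPS = 2  # the budget suggested in the text above


class StepBudgetExceeded(RuntimeError):
    """Raised when answering would need more steps than the budget allows."""


@dataclass(frozen=True)
class Fact:
    key: str
    value: str
    source: str  # where the pre-computed value came from


def resolve(key, facts, fallback_steps=(), max_steps=MAX_REASONING_STEPS):
    """Return a Fact for `key`, preferring the pre-computed store."""
    # Zero reasoning steps: the answer was computed ahead of time.
    if key in facts:
        return facts[key]

    # Otherwise try each fallback in order, but never beyond the budget.
    for used, step in enumerate(fallback_steps, start=1):
        if used > max_steps:
            raise StepBudgetExceeded(
                f"{key!r} needs more than {max_steps} reasoning steps"
            )
        fact = step(key)
        if fact is not None:
            return fact

    # Fail loudly instead of letting the model improvise an answer.
    raise KeyError(key)
```

A missing key here produces an explicit `KeyError` or `StepBudgetExceeded`, which the caller can surface as "no answer available" instead of handing the gap to a model to fill.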

You cannot prompt your way out of a fundamental system design flaw. Strapping an agent to a bad database just makes it fail at machine speed.