Exploring AI, One Insight at a Time

Why 80% of AI Projects Fail (And How to Stop Burning Compute)

A slick Jupyter notebook demo is the most dangerous thing in your company right now.

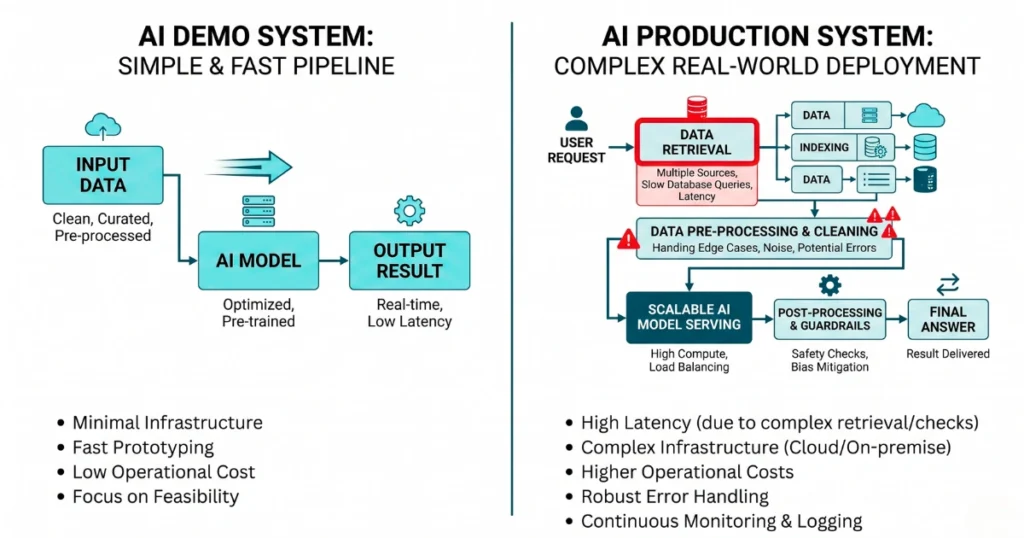

Last quarter, my engineering team showed me a flawless internal RAG pipeline. It summarized our legacy technical documentation instantly.

We high-fived, pushed it to staging, and watched the entire project implode. The vector database choked on poorly formatted enterprise PDFs. API latency spiked to 15 seconds per query. Hallucinations jumped by 30%.

But the worst part wasn’t the broken UX; it was the API bill for compute time spent processing absolute garbage. We learned the hard way that a clean local demo is completely detached from the brutal financial realities of production environments.

The API Wrapper Delusion

Building a thin UI layer over an external provider’s API is not a defensive business moat. It is a massive liability.

When OpenAI or Anthropic updates its underlying model, or simply releases your core feature for free, your product can be wiped out overnight.

You are renting intelligence without building any proprietary systemic value. If your entire business model is just “ChatGPT but with a blue dashboard,” you don’t have a tech company. You have a ticking clock.

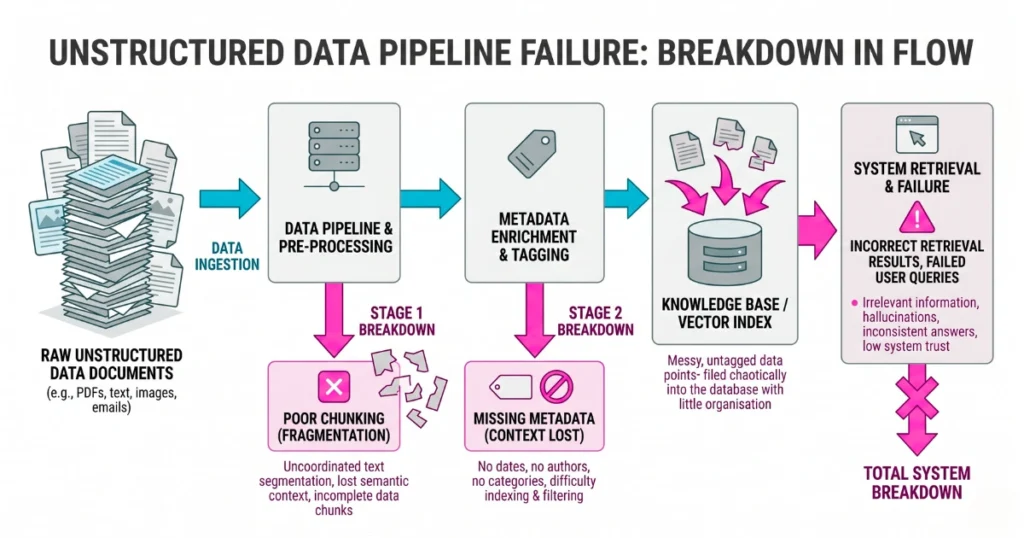

Where the Budget Actually Dies: Unstructured Data

The LLM is almost never the bottleneck. Your data hygiene is.

I see teams dumping thousands of raw, unparsed PDFs into a vector store expecting a miracle. Without aggressive chunking strategies, metadata tagging, and semantic routing, retrieval systems pull garbage context.

If your retrieval accuracy is terrible, rewriting your system prompt to say “think step by step” will not save you. You need a data engineer, not a “prompt whisperer.”
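To make "chunking plus metadata tagging" concrete, here is a minimal Python sketch. It assumes the PDFs have already been converted to text by a separate parser, and it uses character counts as a rough stand-in for token counts. The names `Chunk` and `chunk_document`, and the `source`/`section` metadata fields, are hypothetical and not any vector database's API. The function respects paragraph boundaries, hard-splits only oversized paragraphs with overlap, and stamps every chunk with metadata a retriever can filter on.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)


def chunk_document(text: str, source: str, section: str,
                   max_chars: int = 800, overlap: int = 100) -> list[Chunk]:
    """Split extracted text into paragraph-aware, metadata-tagged chunks.

    Character counts are a rough proxy for tokens (an assumption; swap in
    a real tokenizer for production use).
    """
    if not 0 <= overlap < max_chars:
        raise ValueError("overlap must be in [0, max_chars)")

    # 1. Respect paragraph boundaries; hard-split only oversized paragraphs,
    #    with overlapping windows so no sentence is cut off without context.
    pieces: list[str] = []
    step = max_chars - overlap
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        if len(para) <= max_chars:
            pieces.append(para)
        else:
            pieces.extend(para[i:i + max_chars]
                          for i in range(0, len(para) - overlap, step))

    # 2. Greedily pack small paragraphs together up to max_chars.
    packed: list[str] = []
    buffer = ""
    for piece in pieces:
        candidate = f"{buffer}\n\n{piece}" if buffer else piece
        if len(candidate) <= max_chars:
            buffer = candidate
        else:
            packed.append(buffer)
            buffer = piece
    if buffer:
        packed.append(buffer)

    # 3. Tag every chunk so the retriever can filter by source and section.
    return [
        Chunk(text=t, metadata={"source": source, "section": section, "chunk_index": i})
        for i, t in enumerate(packed)
    ]
```

Filtering on `section` or `source` at query time is one simple way to keep retrieval from pulling context out of an unrelated document.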

The Automation Ceiling (And Why 100% is a Lie)

Stop trying to force a model to handle 100% of a complex task.

In our experience, AI systems in production hit a hard wall at around 80% accuracy on complex tasks. Chasing the remaining 20% of edge cases is what causes catastrophic operational failures and massive compute burn.

The highest-ROI systems optimize for the reliable 80% and build seamless, hard-coded off-ramps to human operators for the rest.
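A hard-coded off-ramp can be as simple as a routing function that runs after the model answers. The sketch below is illustrative only: `ModelResult`, `route`, the 0.85 threshold, and the blocked topics are hypothetical placeholders, and it assumes your pipeline produces some confidence score in [0, 1] (calibrating that score is a separate problem). Anything ungrounded, out of policy, or low-confidence goes to a person.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTOMATE = "automate"
    HUMAN = "human"


@dataclass
class ModelResult:
    query: str
    answer: str
    confidence: float    # assumed to be a calibrated score in [0, 1]
    retrieved_docs: int  # how many chunks grounded the answer


# Hypothetical policy: topics that always go to a human, whatever the model says.
BLOCKED_TOPICS = ("refund", "legal", "cancel contract")


def route(result: ModelResult, threshold: float = 0.85) -> Route:
    """Automate only the reliable slice; send everything else to a person."""
    if result.retrieved_docs == 0:
        return Route.HUMAN  # nothing in the knowledge base backed the answer
    if any(topic in result.query.lower() for topic in BLOCKED_TOPICS):
        return Route.HUMAN  # policy-owned cases are never auto-resolved
    if result.confidence < threshold:
        return Route.HUMAN  # uncertain answers are escalated, not guessed
    return Route.AUTOMATE
```

Because the rules live in plain code rather than in a system prompt, they can be unit-tested, audited, and tuned against the completion-rate metric.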

Measuring What Actually Keeps the Lights On

Engineering teams get obsessed with the novelty of implementing the latest agent framework. As a founder, I just want to know if the system cuts my customer support overhead by a measurable percentage.

You cannot scale a project you cannot measure. Vanity metrics are entirely useless to a CFO reviewing the quarterly burn rate.

| Stop Tracking (Vanity Metrics) | Track This Instead (Hard ROI) |
|---|---|
| Total tokens generated per week | Direct human hours saved per specific task |
| Number of active internal chat users | Cost of API compute vs. cost of manual processing |
| Model response length | Task completion rate before human intervention |
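The right-hand column can be computed from ordinary task logs. This sketch assumes that, for a single task type, you log whether each task finished without a human, its API cost, and any minutes a person still spent on it, plus a measured baseline for doing the task entirely by hand. `TaskRecord`, `roi_report`, and all field names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class TaskRecord:
    completed_without_human: bool
    api_cost_usd: float
    human_minutes_spent: float  # residual human effort on this task (0 if none)


def roi_report(records: list[TaskRecord], baseline_minutes_per_task: float,
               hourly_labor_cost_usd: float) -> dict[str, float]:
    """Hard-ROI metrics for one task type, against a fully manual baseline."""
    if not records:
        raise ValueError("need at least one task record")
    n = len(records)
    residual_minutes = sum(r.human_minutes_spent for r in records)
    baseline_minutes = baseline_minutes_per_task * n
    return {
        # Share of tasks finished before any human stepped in.
        "task_completion_rate": sum(r.completed_without_human for r in records) / n,
        # Human hours saved relative to doing every task by hand.
        "human_hours_saved": (baseline_minutes - residual_minutes) / 60,
        # Compute spend vs. what the same volume would cost manually.
        "api_compute_cost_usd": sum(r.api_cost_usd for r in records),
        "manual_cost_usd": baseline_minutes / 60 * hourly_labor_cost_usd,
    }
```

Counting residual human minutes matters: an automation that still needs a person to check every output saves far less than its completion rate suggests.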

WARNING: Launching internal tools with zero guardrails is a guaranteed compliance disaster. We regularly see internal RAG tools tricked into exposing proprietary API keys because a developer treated “system instructions” as a substitute for actual database access controls.
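The durable fix is to enforce permissions in code before retrieved text ever reaches the prompt, so no phrasing of a user's question can unlock it. A minimal sketch, assuming each stored document carries a set of allowed groups: `Document`, `build_context`, and `SECRET_PATTERN` are hypothetical, and the regex is only a crude illustration of defense-in-depth redaction. It is not a substitute for a real secret scanner or for keeping credentials out of the index in the first place.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]


# Illustrative pattern for credential-looking "key = value" strings only.
SECRET_PATTERN = re.compile(r"\b(api[_-]?key|secret|token)\b\s*[:=]\s*\S+",
                            re.IGNORECASE)


def build_context(candidates: list[Document], user_groups: set[str]) -> list[str]:
    """Filter retrieved documents by the caller's groups, then redact secrets.

    The model only ever sees what this function returns, so access control
    does not depend on the model obeying its instructions.
    """
    visible = [d for d in candidates if d.allowed_groups & user_groups]
    return [SECRET_PATTERN.sub("[REDACTED]", d.text) for d in visible]
```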

The Era of “AI Magic” is Dead

AI is a rigorous engineering discipline that demands intense testing and ruthless financial pragmatism.

Stop buying into vendor hype about autonomous workforces. Stop experimenting without measurement. If you aren’t running automated regression tests on your prompts against a golden dataset, you are flying blind. Your competitors who treat AI like traditional software architecture will happily take your market share while you are still debugging prompts.
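A golden-dataset regression test does not need a framework to get started. The sketch below assumes `generate` is whatever function sends a question through your prompt and model, and it uses simple substring checks as a stand-in for richer grading. `GoldenCase` and `run_regression` are hypothetical names.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class GoldenCase:
    question: str
    must_contain: list[str]  # facts the answer has to mention
    # Known hallucinations or strings that must never leak (e.g. secrets).
    must_not_contain: list[str] = field(default_factory=list)


def run_regression(generate: Callable[[str], str],
                   cases: list[GoldenCase]) -> tuple[float, list[str]]:
    """Run every golden case through the pipeline; return pass rate and failures."""
    if not cases:
        raise ValueError("golden dataset is empty")
    failures = []
    for case in cases:
        answer = generate(case.question).lower()
        has_required = all(s.lower() in answer for s in case.must_contain)
        has_forbidden = any(s.lower() in answer for s in case.must_not_contain)
        if not has_required or has_forbidden:
            failures.append(case.question)
    return 1 - len(failures) / len(cases), failures
```

Wired into CI, this runs on every prompt or model change, and the build fails when the pass rate drops below a threshold you pick (for example, `assert pass_rate >= 0.95`).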