Exploring AI, One Insight at a Time

Open vs. Closed AI Models: Which Will Actually Win in 2026?

The AI industry keeps asking the wrong question. Every week, another benchmark comparison floods social media. But the real production bottleneck in 2026 is no longer the model itself. It is orchestration. CTOs are spending months debating whether…

AI Memory Explained: Why Chatbots Forget Everything (Hidden Problem)

AI memory failures are not caused by hallucinations. The real problem is far more structural: modern AI systems still struggle to manage state reliably across complex workflows. The chatbot may appear intelligent in the moment, but underneath the…

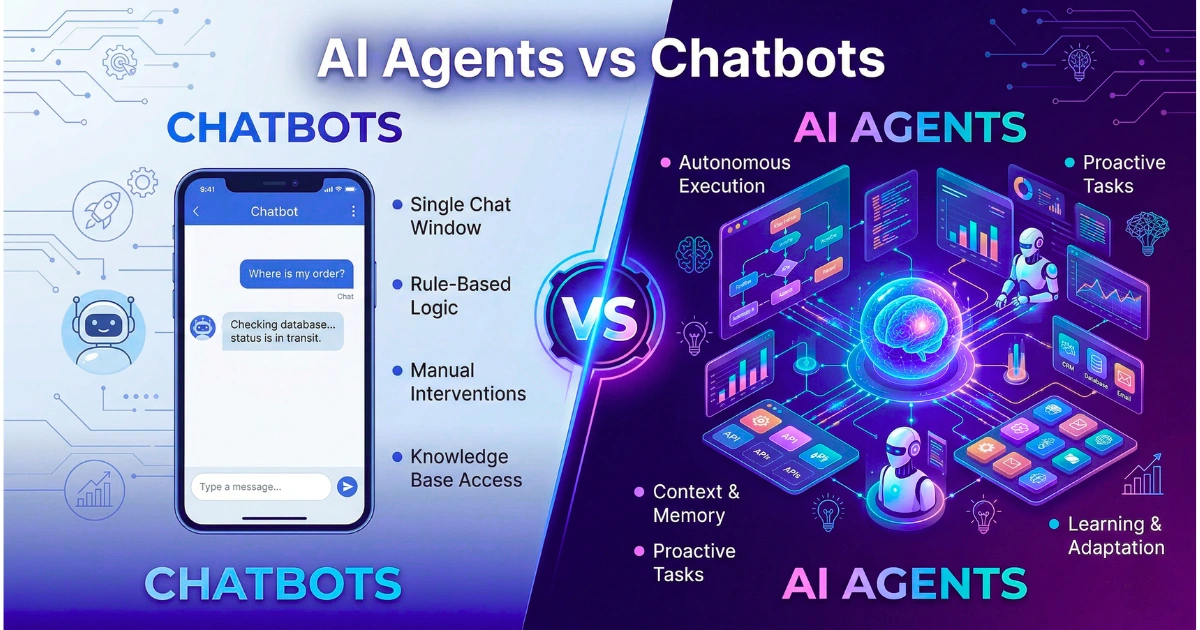

AI Agents vs Chatbots: The Real Difference Explained (2026 Guide)

Chatbots Are Glorified Search Indexes. A chatbot waits for a human to type a string. It is a strictly reactive system, bound by the latency of your vector database and the context window of your chosen model. Indeed,…

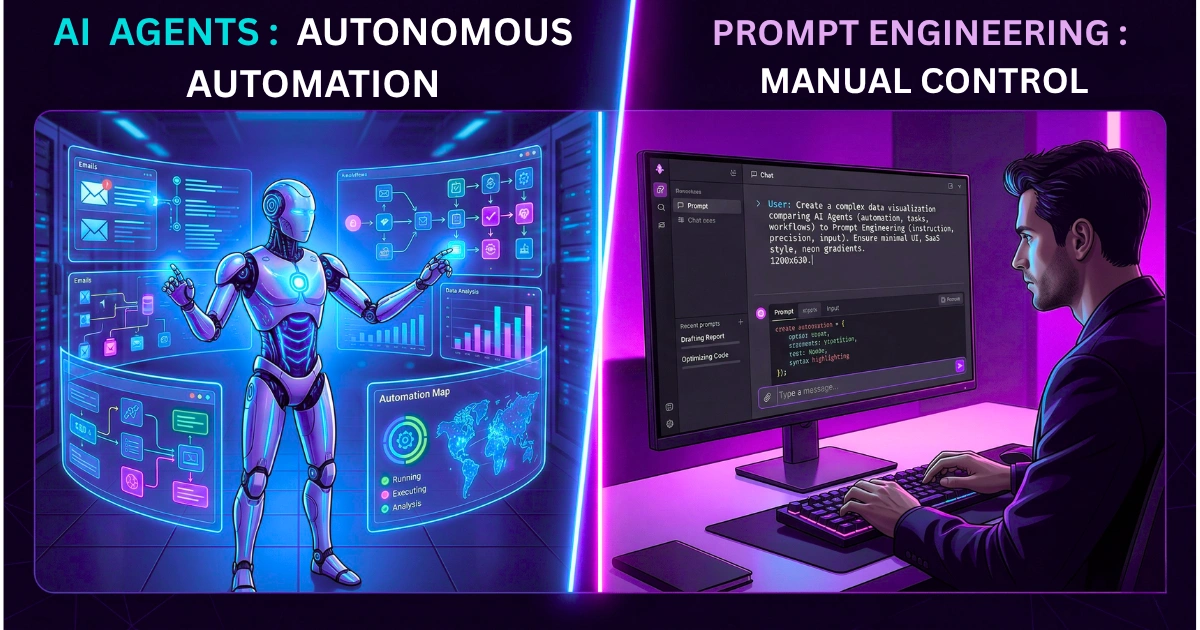

AI Agents vs Prompt Engineering: What Actually Works in 2026?

Prompt engineering is dead. It was a zero-interest-rate hallucination. Indeed, the industry spent three years pretending that elegantly crafting an English sentence was a substitute for systems architecture. Now the industry is pivoting aggressively toward autonomous agents. We…

AI Alignment Problem Explained: Why Even Smart Models Fail

“Black Box” Outputs Void Insurance Deployments Immediately. The core failure mode during the recent underwriting assistant rollout was not a hallucinated policy limit but a fundamental inability to satisfy the National Association of Insurance Commissioners (NAIC) Model Bulletin…